RANDOM POSTs

-

7 Timeless Tips to Learn Any Language in Days, Not Years

Read more: http://www.lifehack.org/305085/7-timeless-tips-learn-any-language-days-not-years

the 75 most common words make up 40% of occurrences

the 200 most common words make up 50% of occurrences

the 524 most common words make up 60% of occurrences

the 1257 most common words make up 70% of occurrences

the 2925 most common words make up 80% of occurrences

the 7444 most common words make up 90% of occurrences

the 13374 most common words make up 95% of occurrences

the 25508 most common words make up 99% of occurrences
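A coverage table like the one above can be rebuilt for any text by counting word frequencies and accumulating them from the most common word down. The following is a minimal sketch, not the method used by the linked article: the function name `words_needed_for_coverage` and the whitespace-split toy corpus are assumptions for illustration only.

```python
from collections import Counter


def words_needed_for_coverage(tokens, targets=(0.5, 0.75, 1.0)):
    """Return {target: rank}, where rank is how many of the most common
    words are needed to account for that fraction of all occurrences.

    Hypothetical helper for illustration; `tokens` is any list of words.
    """
    counts = Counter(tokens)
    total = sum(counts.values())
    remaining = sorted(targets)  # coverage levels still to be reached
    results = {}
    running = 0  # occurrences covered so far

    # Walk the vocabulary from most to least frequent word.
    for rank, (_, freq) in enumerate(counts.most_common(), start=1):
        running += freq
        # One word may push coverage past several targets at once.
        while remaining and running / total >= remaining[0]:
            results[remaining.pop(0)] = rank
    return results


# Toy corpus (assumption): real tables need a large, tokenized corpus.
sample = "the the the the cat cat sat on mat mat".split()
coverage = words_needed_for_coverage(sample)
for target, rank in coverage.items():
    print(f"the {rank} most common words make up {target:.0%} of occurrences")
```

The exact numbers depend heavily on the corpus and on how words are tokenized and normalized, so results from a different text will not match the figures quoted above.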

-

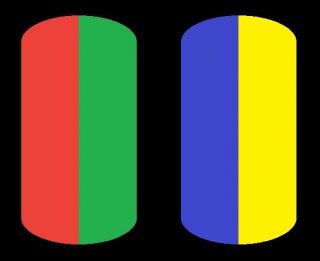

The Forbidden colors – Red-Green & Blue-Yellow: The Stunning Colors You Can’t See

Read more: www.livescience.com/17948-red-green-blue-yellow-stunning-colors.html

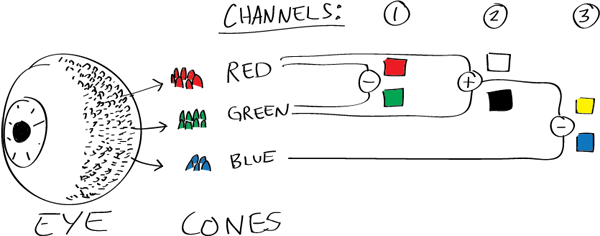

While the human eye has red, green, and blue-sensing cones, those cones are cross-wired in the retina to produce a luminance channel plus a red-green and a blue-yellow channel, and it’s data in that color space (known technically as “LAB”) that goes to the brain. That’s why we can’t perceive a reddish-green or a yellowish-blue, whereas such colors can be represented in the RGB color space used by digital cameras.

https://en.rockcontent.com/blog/the-use-of-yellow-in-data-design

The back of the retina is covered in light-sensitive neurons known as cone cells and rod cells. There are three types of cone cells, each sensitive to different ranges of light. These ranges overlap, but for convenience the cones are referred to as blue (short-wavelength), green (medium-wavelength), and red (long-wavelength). The rod cells are primarily used in low-light situations, so we’ll ignore those for now.

When light enters the eye and hits the cone cells, the cones get excited and send signals through the optic nerve to the brain's visual cortex. Different wavelengths of light excite different combinations of cones to varying levels, which generates our perception of color. The red cones are the most sensitive to light, and the blue cones are the least sensitive. The sensitivity of green and red cones overlaps for most of the visible spectrum.

Here’s how your brain takes the signals of light intensity from the cones and turns them into color information. To see red or green, your brain finds the difference between the levels of excitement in your red and green cones. This is the red-green channel.

To get “brightness,” your brain combines the excitement of your red and green cones. This creates the luminance, or black-white, channel. To see yellow or blue, your brain then finds the difference between this luminance signal and the excitement of your blue cones. This is the yellow-blue channel.
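The three channels described above can be written out as simple arithmetic. This is only a sketch of the description in the text, assuming unweighted sums and differences of cone excitations in arbitrary units; the function name `opponent_channels` is hypothetical, and real models of vision apply different weights to each cone type.

```python
def opponent_channels(red, green, blue):
    """Combine cone excitations into the three channels described in the text.

    Assumption: plain, unweighted sums and differences in arbitrary units.
    """
    red_green = red - green          # difference of red and green cones
    luminance = red + green          # combined red and green excitement
    yellow_blue = luminance - blue   # luminance compared against blue cones
    return {
        "red_green": red_green,
        "luminance": luminance,
        "yellow_blue": yellow_blue,
    }


# Red and green cones both strongly excited, blue barely excited:
# the red-green channel is balanced and the yellow-blue channel swings
# strongly toward yellow.
yellowish = opponent_channels(red=1.0, green=1.0, blue=0.0)
print(yellowish)
```

Because each opponent channel is a single signed value, it can lean toward red or toward green but not both at once, which is one way to picture why a reddish-green is not perceived.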

From the calculations made in the brain along those three channels, we get four basic colors: blue, green, yellow, and red. Seeing blue is what you experience when short-wavelength light excites the blue cones more than the green and red.

Seeing green happens when light excites the green cones more than the red cones. Seeing red happens when only the red cones are excited by long-wavelength light.

Here’s where it gets interesting. Seeing yellow is what happens when BOTH the green AND red cones are highly excited near their peak sensitivity. This is the biggest collective excitement that your cones ever have, aside from seeing pure white.

Notice that yellow occurs at peak intensity in the graph to the right. Further, the lens and cornea of the eye happen to block shorter wavelengths, reducing sensitivity to blue and violet light.

-

Why Streaming Content Could Be Hollywood’s Final Act

Read more: https://www.forbes.com/sites/carolinereid/2024/10/24/why-streaming-could-be-hollywoods-final-act/

The future of Hollywood was reshaped in 1997 with the founding of Netflix, an innovative mail-order DVD rental business by Reed Hastings and Marc Randolph. Unlike traditional rentals, Netflix allowed subscribers to retain DVDs as long as they wanted but required returns before ordering more, allowing the company to collect uninterrupted subscription fees. By 2009, Netflix was shipping nearly a billion DVDs annually but had already set its sights on streaming. The transition to streaming, launched in 2007, faced initial challenges due to limited broadband availability but soon became popular, outpacing the DVD business and bringing Netflix millions of subscribers.

Netflix’s dominance drove traditional media giants to reevaluate their strategies. Disney, initially hesitant, eventually licensed its vast library to Netflix, contributing to the latter’s rise. However, by 2017, Disney pivoted to launch its own platform, Disney+, breaking its Netflix partnership and acquiring 21st Century Fox for content diversification. Disney’s decision sparked a broader industry shift as other studios also developed streaming services, aiming to retain full revenue from direct-to-consumer content instead of sharing it with theaters or traditional networks.

Disney+ quickly gained traction, especially during the pandemic, reaching millions of subscribers and temporarily boosting Disney’s stock. However, the reliance on streaming and subscriber growth strained Disney financially, with high operating costs and content expenses. Content exclusivity backfired, creating complexity for fans, particularly with interconnected Marvel shows, and contributing to user dissatisfaction. Additionally, Disney’s decision to release films like Black Widow simultaneously in theaters and on streaming led to backlash, lawsuits, and lost box office revenue, highlighting the downsides of simultaneous releases.

Facing ballooning expenses and subscriber attrition post-pandemic, Disney’s leadership returned to more traditional revenue models, emphasizing exclusive theater releases and licensing content to third parties. They also introduced cost-saving measures like job cuts and content reductions to stabilize financial losses. This shift echoes a partial return to pre-streaming industry norms as Disney and other studios explore “always-on” channels within their streaming platforms, aiming to balance direct consumer access with sustainable profit models.

-

Scratchapixel 4.0 – Free course on Computer Graphics

Teaching computer graphics programming to regular folks. Original content written by professionals with years of field experience. We dive straight into code, dissect equations, avoid fancy jargon and external libraries. Explained in plain English. Free.

https://www.scratchapixel.com/

-

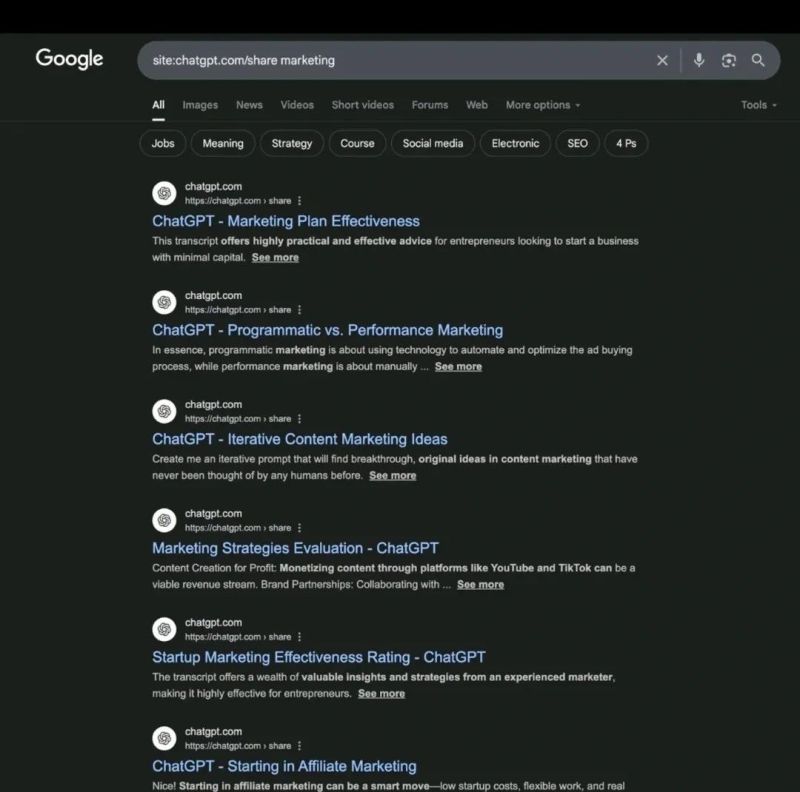

AI and the Law – Your (privately) shared ChatGPT chats might be showing up on Google

Many users assume shared conversations are only seen by friends or colleagues, but when you use OpenAI’s share feature, those chats are now indexed by search engines like Google.

Meaning: your “private” AI prompts could end up very public. Finding them with targeted search queries is called Google dorking, and it’s shockingly effective.

Over 70,000 chats are now publicly viewable. Some are harmless.

Others? They might expose sensitive strategies, internal docs, product plans, even company secrets.

OpenAI currently does not block indexing. So if you’ve ever shared something thinking it’s “just a link” — it might now be searchable by anyone. You can even build a bot to crawl and analyze these.

Welcome to the new visibility layer of AI. I can’t say I am surprised…


DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.