LATEST POSTS

-

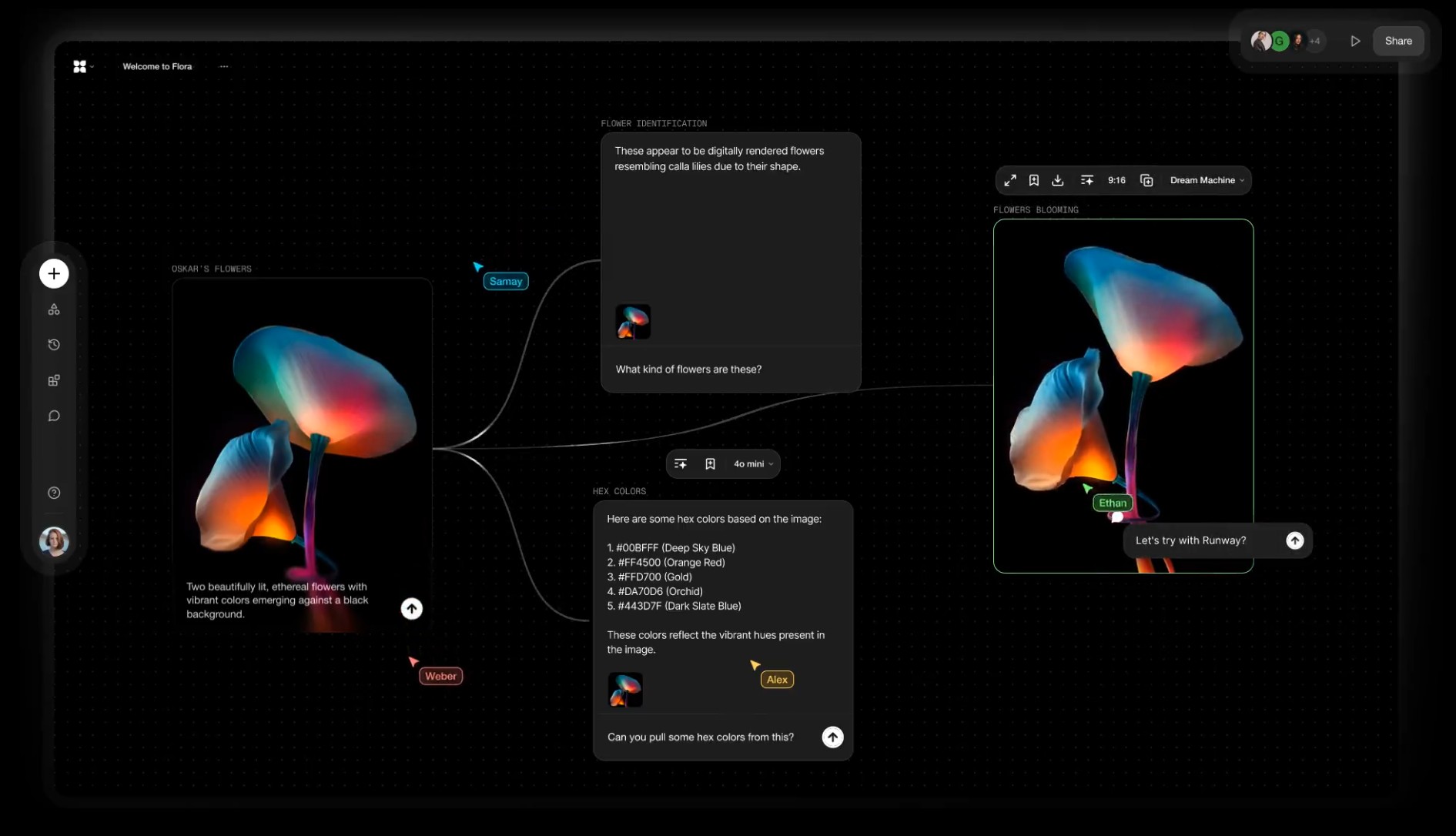

Alibaba FloraFauna.ai – AI Collaboration canvas

-

Runway introduces Gen-4 – Generate consistent elements by controlling input elements

https://runwayml.com/research/introducing-runway-gen-4

With Gen-4, you are now able to precisely generate consistent characters, locations and objects across scenes. Simply set your look and feel and the model will maintain coherent world environments while preserving the distinctive style, mood and cinematographic elements of each frame. Then, regenerate those elements from multiple perspectives and positions within your scenes.

Here’s why Gen-4 changes everything:

✨ Unwavering Character Consistency

• Characters and environments now stay flawlessly consistent across shots, even as lighting shifts or angles pivot, all from one reference image. No more jarring transitions or mismatched details.

✨ Dynamic Multi-Angle Mastery

• Generate cohesive scenes from any perspective without manual tweaks. Gen-4 intuitively crafts multi-angle coverage, a leap past earlier models that struggled with spatial continuity.

✨ Physics That Feel Alive

• Capes ripple, objects collide, and fabrics drape with startling realism. Gen-4 simulates real-world physics, breathing life into scenes that once demanded painstaking manual animation.

✨ Seamless Studio Integration

• Outputs now blend effortlessly with live-action footage or VFX pipelines. Major studios are already adopting Gen-4 to prototype scenes faster and slash production timelines.

• Why this matters: Gen-4 erases the line between AI experiments and professional filmmaking. Directors can iterate on cinematic sequences in days, not months, democratizing access to tools that once required million-dollar budgets.

-

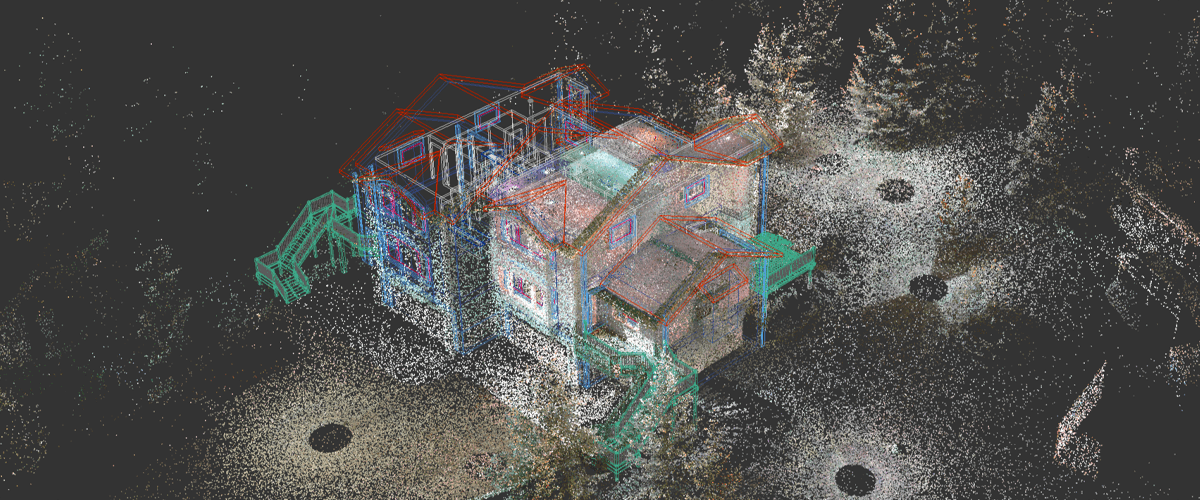

Florent Poux – Top 10 Open Source Libraries and Software for 3D Point Cloud Processing

As point cloud processing becomes increasingly important across industries, I wanted to share the most powerful open-source tools I’ve used in my projects:

1️⃣ Open3D (http://www.open3d.org/)

The gold standard for point cloud processing in Python. Incredible visualization capabilities, efficient data structures, and comprehensive geometry processing functions. Perfect for both research and production.

2️⃣ PCL – Point Cloud Library (https://pointclouds.org/)

The C++ powerhouse of point cloud processing. Extensive algorithms for filtering, feature estimation, surface reconstruction, registration, and segmentation. Steep learning curve but unmatched performance.

3️⃣ PyTorch3D (https://pytorch3d.org/)

Facebook’s differentiable 3D library. Seamlessly integrates point cloud operations with deep learning. Essential if you’re building neural networks for 3D data.

4️⃣ PyTorch Geometric (https://lnkd.in/eCutwTuB)

Specializes in graph neural networks for point clouds. Implements cutting-edge architectures like PointNet, PointNet++, and DGCNN with optimized performance.

5️⃣ Kaolin (https://lnkd.in/eyj7QzCR)

NVIDIA’s 3D deep learning library. Offers differentiable renderers and accelerated GPU implementations of common point cloud operations.

6️⃣ CloudCompare (https://lnkd.in/emQtPz4d)

More than just visualization. This desktop application lets you perform complex processing without writing code. Perfect for quick exploration and comparison.

7️⃣ LAStools (https://lnkd.in/eRk5Bx7E)

The industry standard for LiDAR processing. Fast, scalable, and memory-efficient tools specifically designed for massive aerial and terrestrial LiDAR data.

8️⃣ PDAL – Point Data Abstraction Library (https://pdal.io/)

Think of it as “GDAL for point clouds.” Powerful for building processing pipelines and handling various file formats and coordinate transformations.

9️⃣ Open3D-ML (https://lnkd.in/eWnXufgG)

Extends Open3D with machine learning capabilities. Implementations of state-of-the-art 3D deep learning methods with consistent APIs.

🔟 MeshLab (https://www.meshlab.net/)

The Swiss Army knife for mesh processing. While primarily for meshes, its point cloud processing capabilities are excellent for cleanup, simplification, and reconstruction.

-

Comfy-Org comfy-cli – A Command Line Tool for ComfyUI

https://github.com/Comfy-Org/comfy-cli

comfy-cli is a command line tool that helps users easily install and manage ComfyUI, a powerful open-source machine learning framework. With comfy-cli, you can quickly set up ComfyUI, install packages, and manage custom nodes, all from the convenience of your terminal.

    C:\<PATH_TO>\python.exe -m venv C:\comfyUI_env
    cd C:\comfyUI_env
    C:\comfyUI_env\Scripts\activate.bat
    C:\<PATH_TO>\python.exe -m pip install comfy-cli
    comfy --workspace=C:\comfyUI_env\ComfyUI install
    # then
    comfy launch
    # or
    comfy launch -- --cpu --listen 0.0.0.0

If you are trying to clone a different install, run pip freeze on it first, then install those requirements in the new environment.

    # from the original env
    python.exe -m pip freeze > M:\requirements.txt
    # under the new venv env
    pip install -r M:\requirements.txt

-

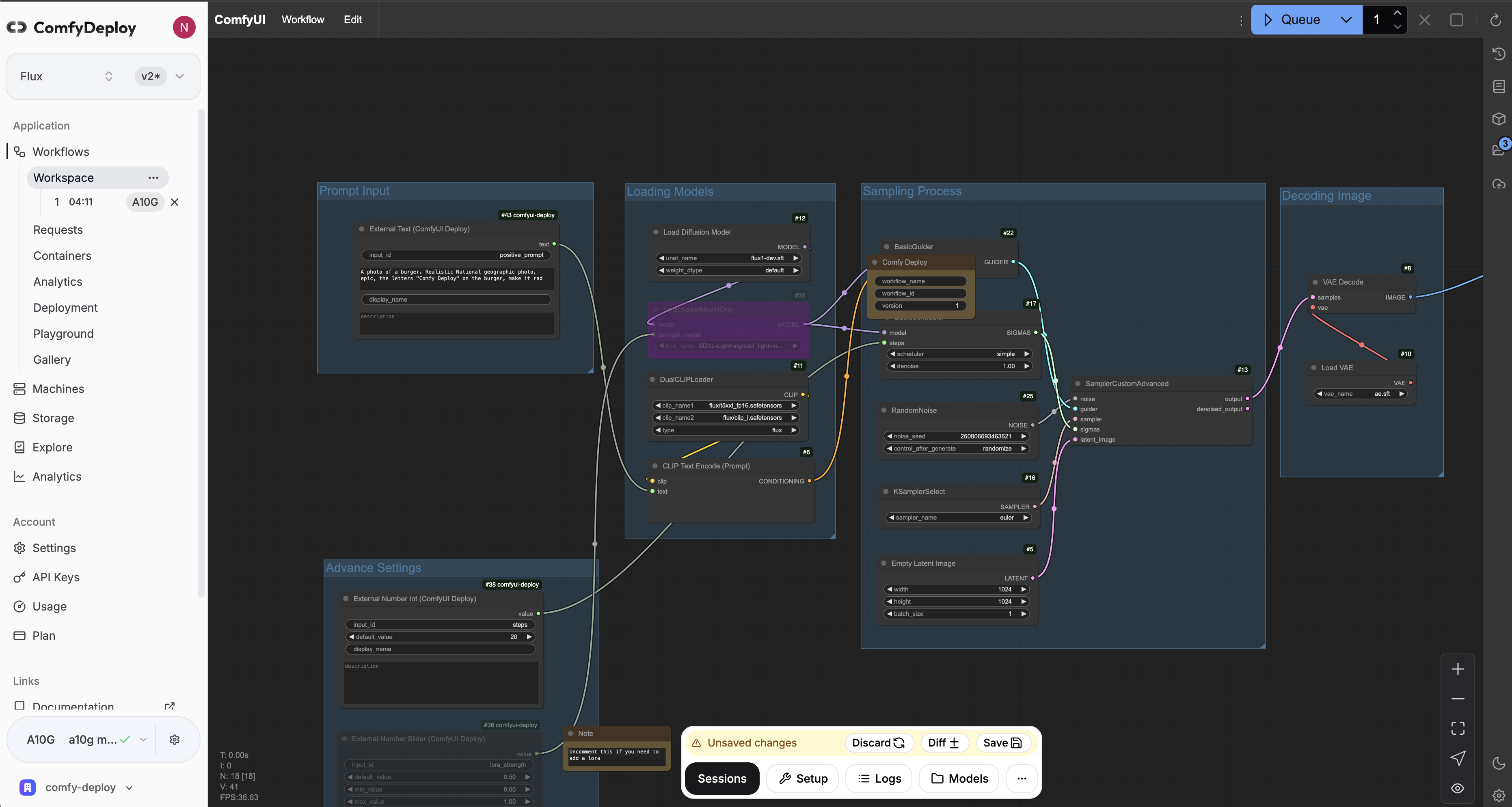

ComfyDeploy – A way for teams to use ComfyUI and power apps

https://www.comfydeploy.com/docs/v2/introduction

1 – Import your workflow

2 – Build a machine configuration to run your workflows on

3 – Download models into your private storage, to be used in your workflows and team.

4 – Run ComfyUI in the cloud to modify and test your workflows on cloud GPUs

5 – Expose workflow inputs with our custom nodes, for API and playground use

6 – Deploy APIs

7 – Let your team use your workflows in playground without using ComfyUI

-

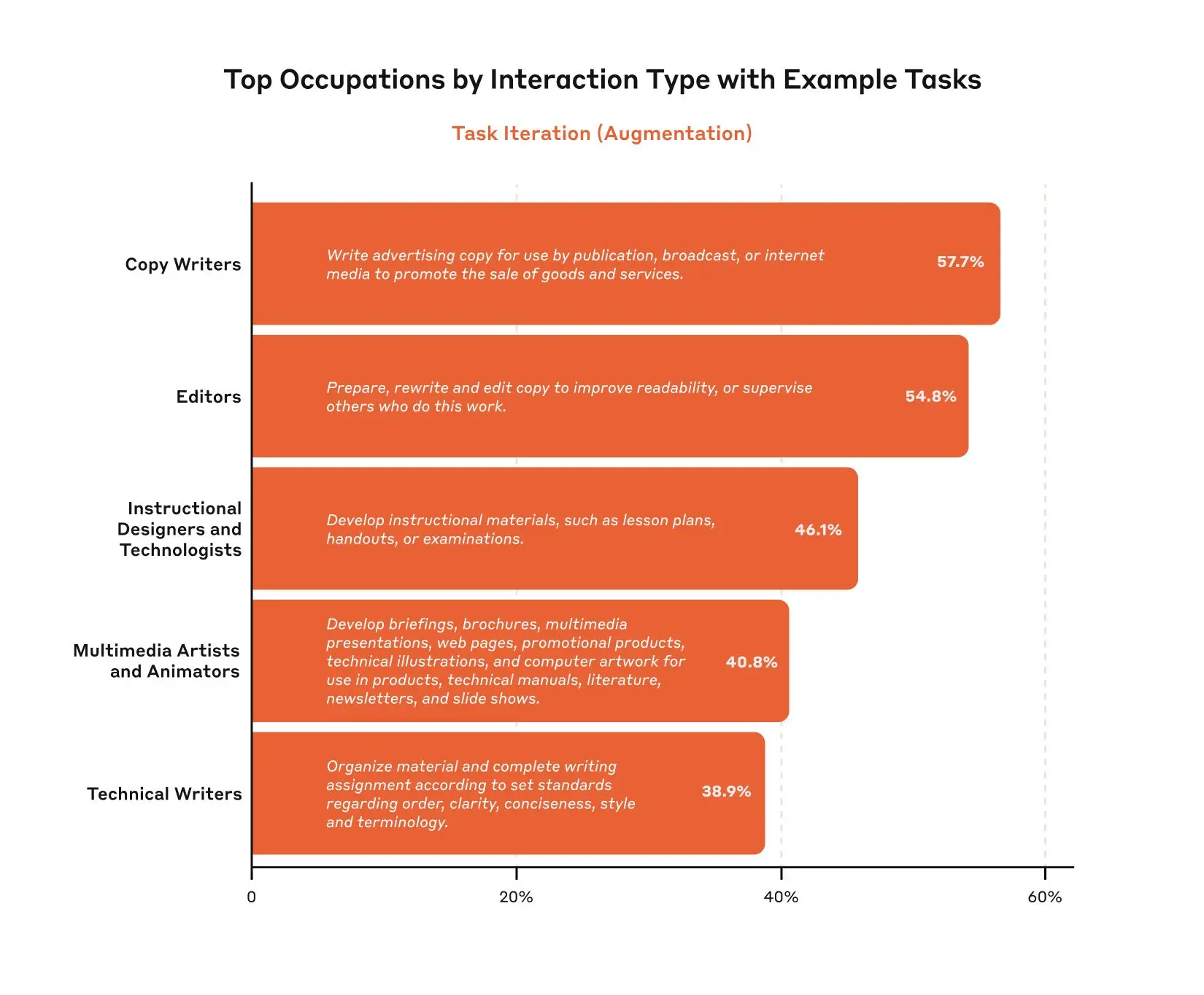

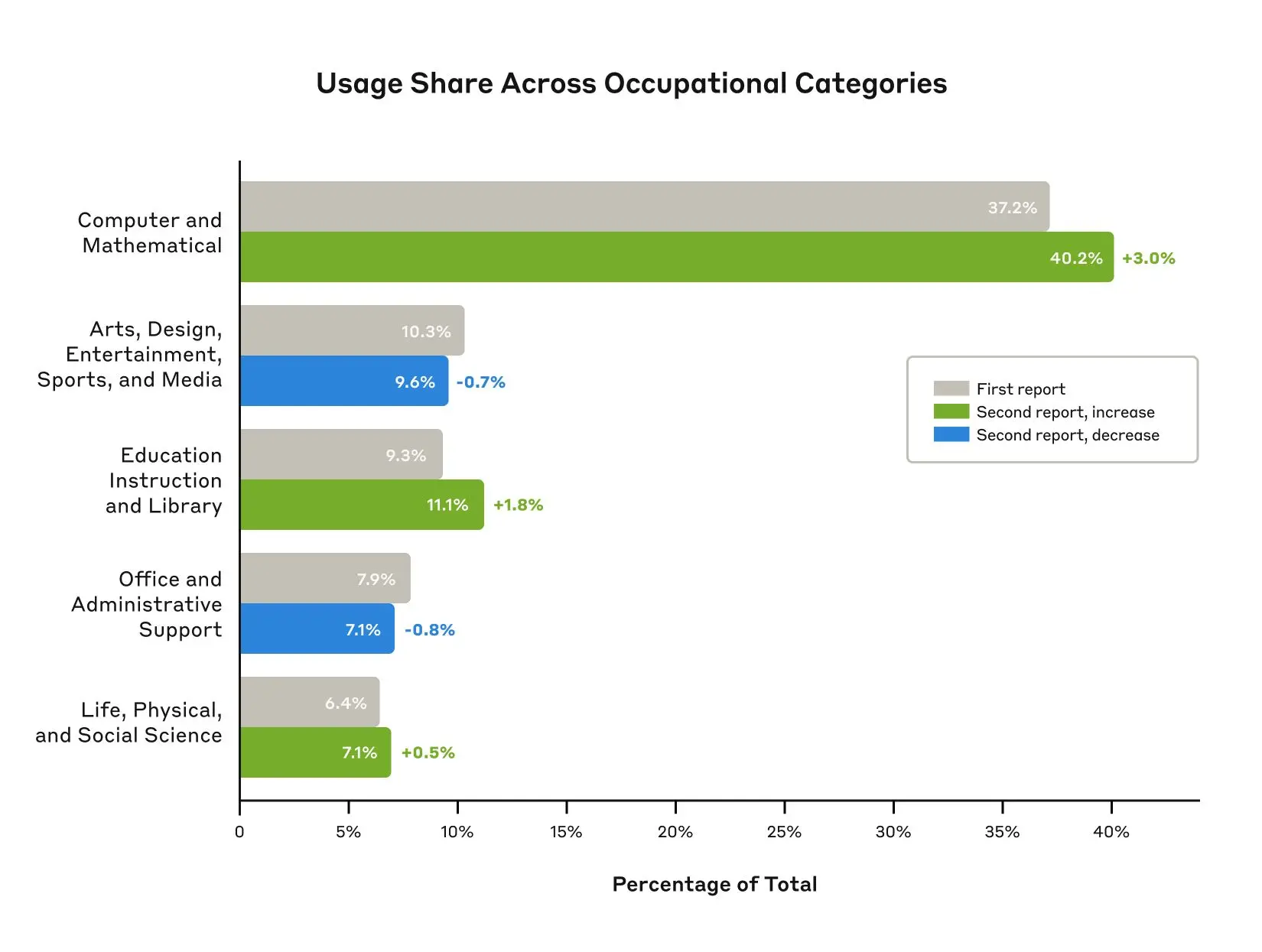

Anthropic Economic Index – Insights from Claude 3.7 Sonnet on AI future prediction

https://www.anthropic.com/news/anthropic-economic-index-insights-from-claude-sonnet-3-7

As models continue to advance, so too must our measurement of their economic impacts. In our second report, covering data since the launch of Claude 3.7 Sonnet, we find relatively modest increases in coding, education, and scientific use cases, and no change in the balance of augmentation and automation. We find that Claude’s new extended thinking mode is used with the highest frequency in technical domains and tasks, and identify patterns in automation / augmentation patterns across tasks and occupations. We release datasets for both of these analyses.

-

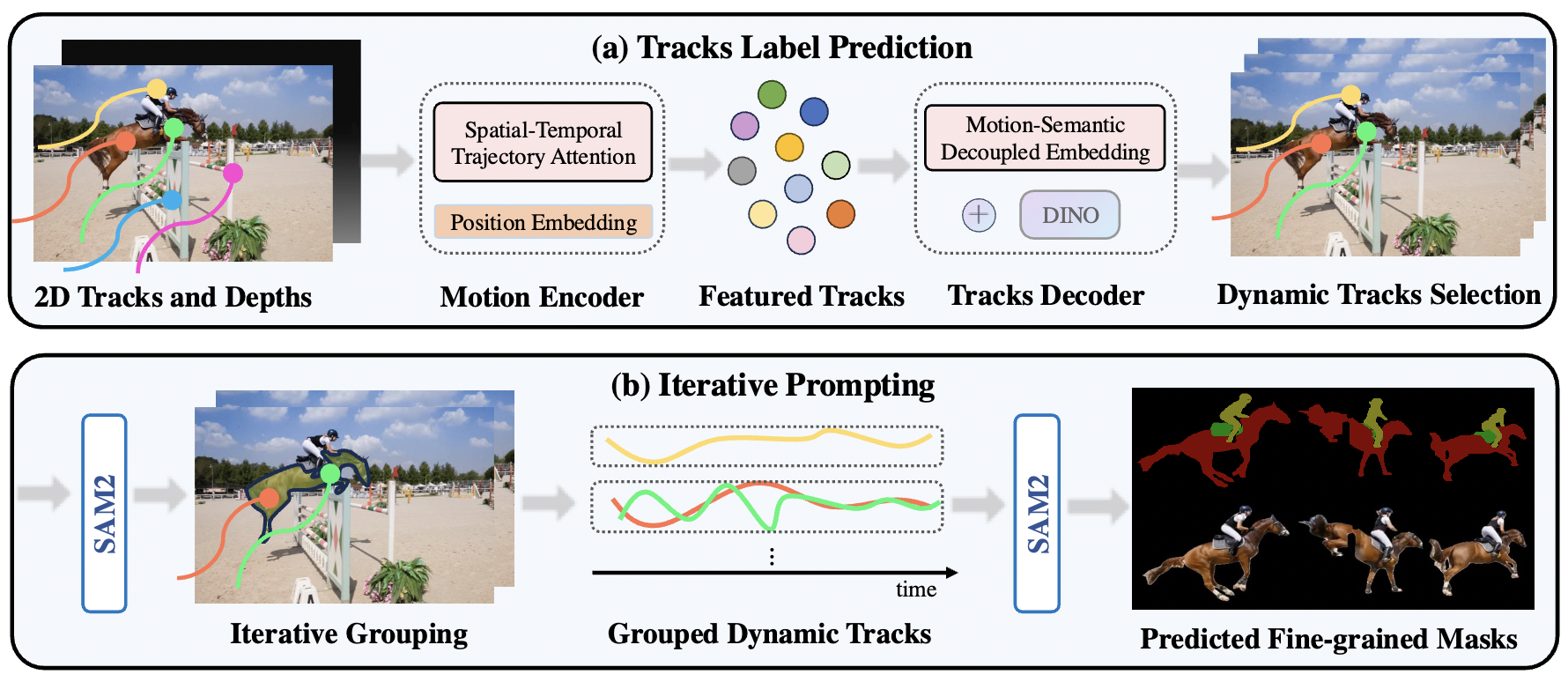

Segment Any Motion in Videos

https://github.com/nnanhuang/SegAnyMo

Overview of the pipeline. We take 2D tracks and depth maps generated by off-the-shelf models as input. A motion encoder processes them to capture motion patterns, producing featured tracks. Next, a track decoder that integrates DINO features decodes the featured tracks by decoupling motion and semantic information, ultimately yielding the dynamic trajectories (a). Finally, using SAM2, we group dynamic tracks belonging to the same object and generate fine-grained moving object masks (b).

-

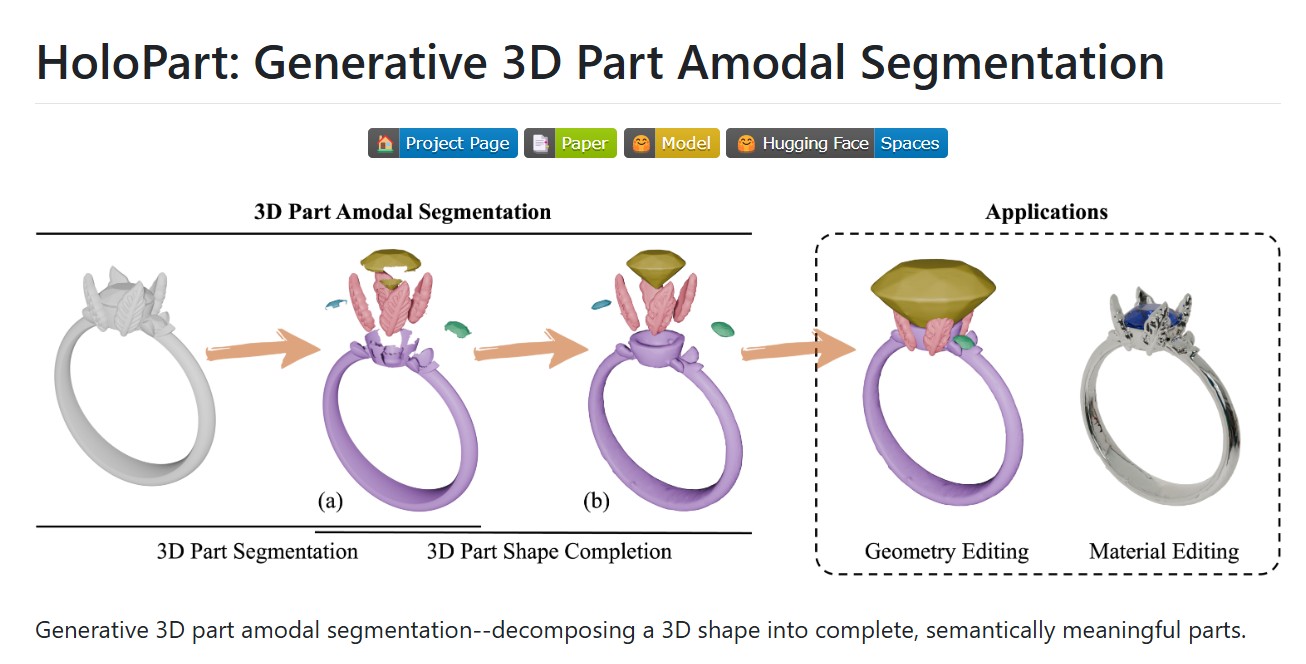

HoloPart – Generative 3D Models Part Amodal Segmentation

https://vast-ai-research.github.io/HoloPart

https://huggingface.co/VAST-AI/HoloPart

https://github.com/VAST-AI-Research/HoloPart

Applications:

– 3d printing segmentation

– texturing segmentation

– animation segmentation

– modeling segmentation

-

The Diary Of A CEO – A talk with Dr. Bessel Van Der Kolk described as “perhaps the most influential psychiatrist of the 21st century”

– Why are traumatic memories not like normal memories?

– What it was like working in a mental asylum.

– Does childhood trauma impact us permanently?

– Can yoga reverse deep past trauma?

FEATURED POSTS

-

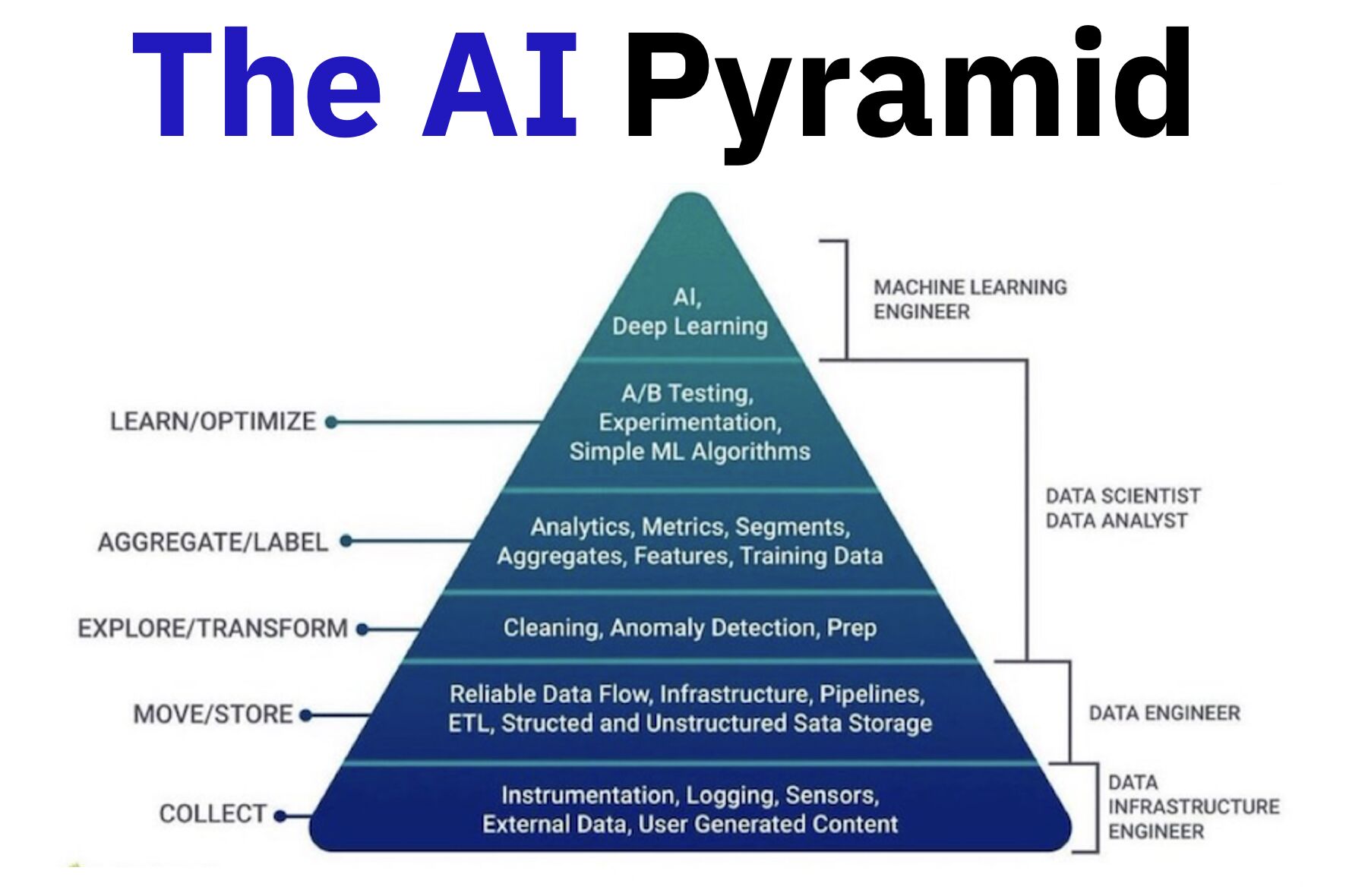

Andreas Horn – Want cutting edge AI?

The building blocks of AI and essential processes:

– Collect: Data from sensors, logs, and user input.

– Move/Store: Build infrastructure, pipelines, and reliable data flow.

– Explore/Transform: Clean, prep, and detect anomalies to make the data usable.

– Aggregate/Label: Add analytics, metrics, and labels to create training data.

– Learn/Optimize: Experiment, test, and train AI models.

The layers of data and how they become intelligent:

– Instrumentation and logging: Sensors, logs, and external data capture the raw inputs.

– Data flow and storage: Pipelines and infrastructure ensure smooth movement and reliable storage.

– Exploration and transformation: Data is cleaned, prepped, and anomalies are detected.

– Aggregation and labeling: Analytics, metrics, and labels create structured, usable datasets.

– Experimenting/AI/ML: Models are trained and optimized using the prepared data.

– AI insights and actions: Advanced AI generates predictions, insights, and decisions at the top.

Who makes it happen and key roles:

– Data Infrastructure Engineers: Build the foundation — collect, move, and store data.

– Data Engineers: Prep and transform the data into usable formats.

– Data Analysts & Scientists: Aggregate, label, and generate insights.

– Machine Learning Engineers: Optimize and deploy AI models.

The magic of AI is in how these layers and roles work together. The stronger your foundation, the smarter your AI.

-

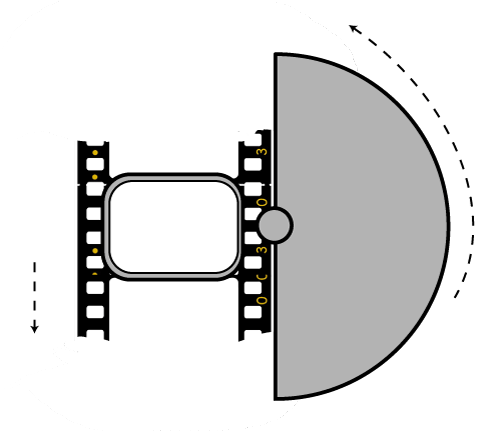

Photography basics: Shutter angle and shutter speed and motion blur

http://www.shutterangle.com/2012/cinematic-look-frame-rate-shutter-speed/

https://www.cinema5d.com/global-vs-rolling-shutter

https://www.wikihow.com/Choose-a-Camera-Shutter-Speed

The shutter is the device that controls how much light passes through the lens. In practice, it controls how long the film is exposed.

Shutter speed is how long this device stays open, which also determines motion blur: the longer it stays open, the blurrier the captured image.

The number refers to the amount of light actually allowed through.

As a reference, shooting at 24fps with a 180-degree shutter angle, or a shutter speed of 1/48th of a second (about 0.0208 s of exposure time), produces motion blur similar to what we perceive with the naked eye.

Shutter speed is often expressed as a (shutter) angle for historical reasons, as the original exposure mechanism was controlled through a pie-shaped mirror in front of the lens.

A shutter of 180 degrees blocks light for half of the circle and lets it through for the other half (half blocked, half open). At 270 degrees the blocking part is one quarter of the pie, which allows for a longer exposure time (three quarters open, one quarter closed). At 90 degrees the blocking part is three quarters of the pie, which allows for a shorter exposure (one quarter open, three quarters closed).
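The relation between shutter angle, frame rate and exposure time described above can be written as exposure = (angle / 360) / fps. The snippet below is a minimal sketch of that arithmetic; the function names `exposure_time` and `shutter_angle` are illustrative and do not come from any camera library.

```python
def exposure_time(shutter_angle_deg: float, fps: float) -> float:
    """Exposure time in seconds for a given shutter angle and frame rate.

    The open fraction of the rotating disc is angle / 360, and each frame
    lasts 1 / fps seconds, so exposure = (angle / 360) / fps.
    """
    if not 0 < shutter_angle_deg <= 360:
        raise ValueError("shutter angle must be in (0, 360] degrees")
    if fps <= 0:
        raise ValueError("fps must be positive")
    return (shutter_angle_deg / 360.0) / fps


def shutter_angle(exposure_s: float, fps: float) -> float:
    """Inverse relation: shutter angle in degrees for an exposure time."""
    if exposure_s <= 0 or fps <= 0:
        raise ValueError("exposure time and fps must be positive")
    return exposure_s * fps * 360.0


if __name__ == "__main__":
    # Compare the three angles discussed in the text at 24 fps.
    for angle in (90, 180, 270):
        t = exposure_time(angle, 24)
        print(f"{angle:>3} degrees at 24 fps -> 1/{1 / t:.0f} s ({t:.4f} s)")
```

At 24fps this gives 1/96 s for 90 degrees, 1/48 s for 180 degrees and 1/32 s for 270 degrees, matching the half-open, quarter-open and three-quarters-open cases.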

-

STOP FCC – SAVE THE FREE NET

Help save free sites like this one.

The FCC voted to kill net neutrality and let ISPs like Comcast ruin the web with throttling, censorship, and new fees. Congress has 60 legislative days to overrule them and save the Internet using the Congressional Review Act.

https://www.battleforthenet.com/

http://mashable.com/2012/01/17/sopa-dangerous-opinion/

-

Björn Ottosson – How software gets color wrong

https://bottosson.github.io/posts/colorwrong/

Most software around us today is decent at accurately displaying colors. Processing of colors is another story unfortunately, and is often done badly.

To understand what the problem is, let’s start with an example of three ways of blending green and magenta:

- Perceptual blend – A smooth transition using a model designed to mimic human perception of color. The blending is done so that the perceived brightness and color varies smoothly and evenly.

- Linear blend – A model for blending color based on how light behaves physically. This type of blending can occur in many ways naturally, for example when colors are blended together by focus blur in a camera or when viewing a pattern of two colors at a distance.

- sRGB blend – This is how colors would normally be blended in computer software, using sRGB to represent the colors.

Let’s look at some more examples of blending of colors, to see how these problems surface more practically. The examples use strong colors since then the differences are more pronounced. This is using the same three ways of blending colors as the first example.

Instead of making it as easy as possible to work with color, most software makes it unnecessarily hard, by doing image processing with representations not designed for it. Approximating the physical behavior of light with linear RGB models is one easy thing to do, but more work is needed to create image representations tailored for image processing and human perception.

Also see: