LATEST POSTS

-

Tencent Hunyuan AI Video – A Systematic Framework For Large Video Generation Model

https://aivideo.hunyuan.tencent.com

https://github.com/Tencent/HunyuanVideo

Unlike models such as Sora, Pika2, and Veo2, HunyuanVideo’s neural network weights are uncensored and openly distributed. This means the model can be run locally under the right circumstances (for example, on a consumer GPU with 24 GB of VRAM), and it can be fine-tuned or used with LoRAs to teach it new concepts.

-

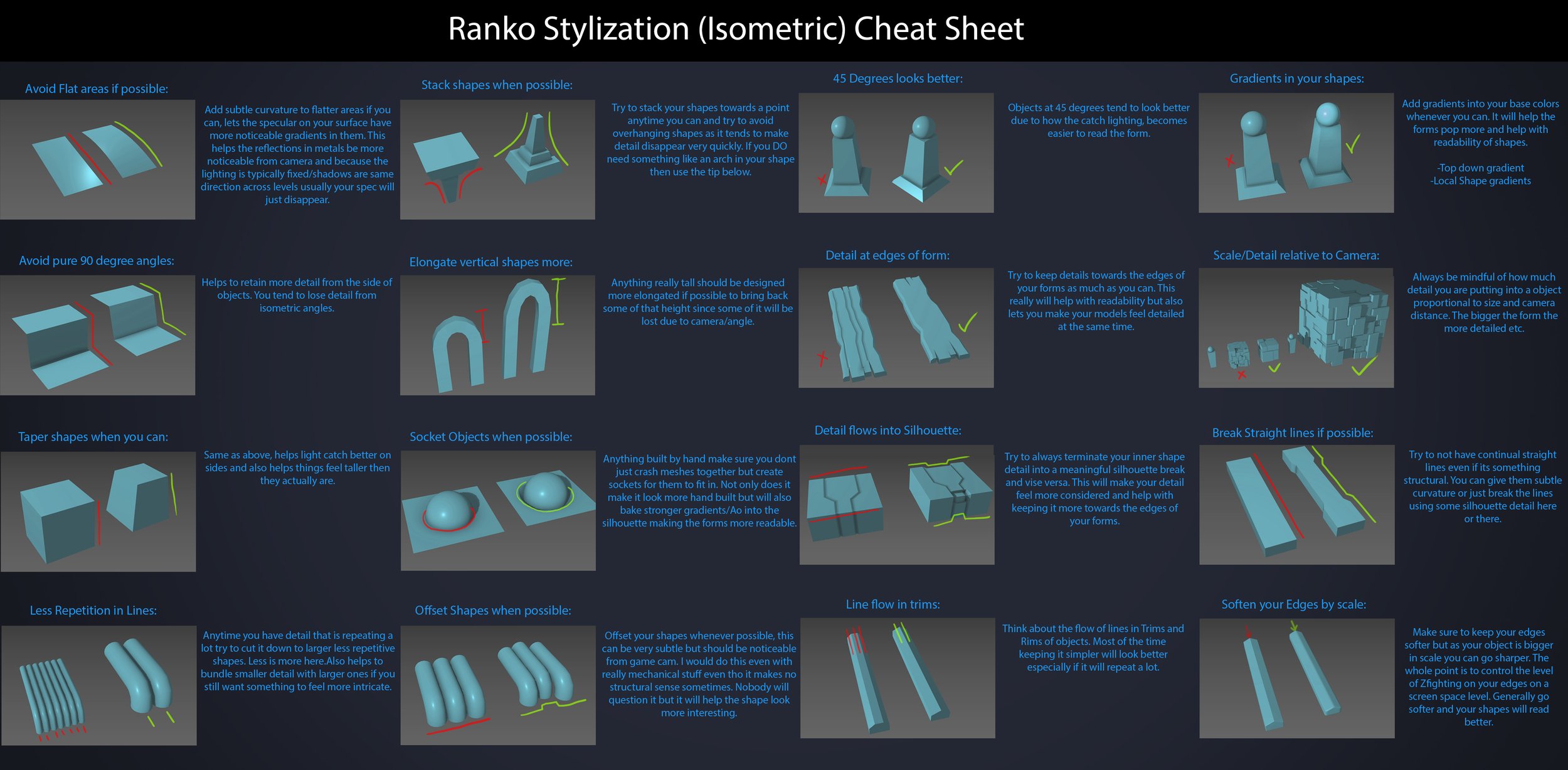

Ranko Prozo – Modelling design tips

For every project I work on, I create a stylization cheat sheet. Every project is unique, but some principles carry over no matter what. This is a sheet I use a lot when I work on isometric stylized projects to help keep my assets consistent and interesting. None of these concepts are my own, just lots of tips I have learned over the years. I have also added this to a page on my website and will continue to update it with more tips and tricks; I just need time to compile it all :)

-

Wyz Borrero – AI-generated “casting”

Guillermo del Toro and Ben Affleck, among others, have voiced concerns about the capabilities of generative AI in the creative industries. They believe that while AI can produce text, images, sound, and video that are technically proficient, it lacks the authentic emotional depth and creative intuition inherent in human artistry—qualities that define works like those of Shakespeare, Dalí, or Hitchcock.

Generative AI models are trained on vast datasets and excel at recognizing and replicating patterns. They can generate coherent narratives, mimic writing or artistic styles, and even compose poetry and music. However, they do not possess consciousness or genuine emotions. The “emotion” conveyed in AI-generated content is a reflection of learned patterns rather than true emotional experience.

Having extensively tested and used generative AI over the past four years, I observe that the rapid advancement of the field suggests many current limitations could be overcome in the future. As models become more sophisticated and training data expands, AI systems are increasingly capable of generating content that is coherent, contextually relevant, stylistically diverse, and can even evoke emotional responses.

The following video is an AI-generated “casting” using a text-to-video model specifically prompted to test emotion, expressions, and microexpressions. This is only the beginning.

FEATURED POSTS

-

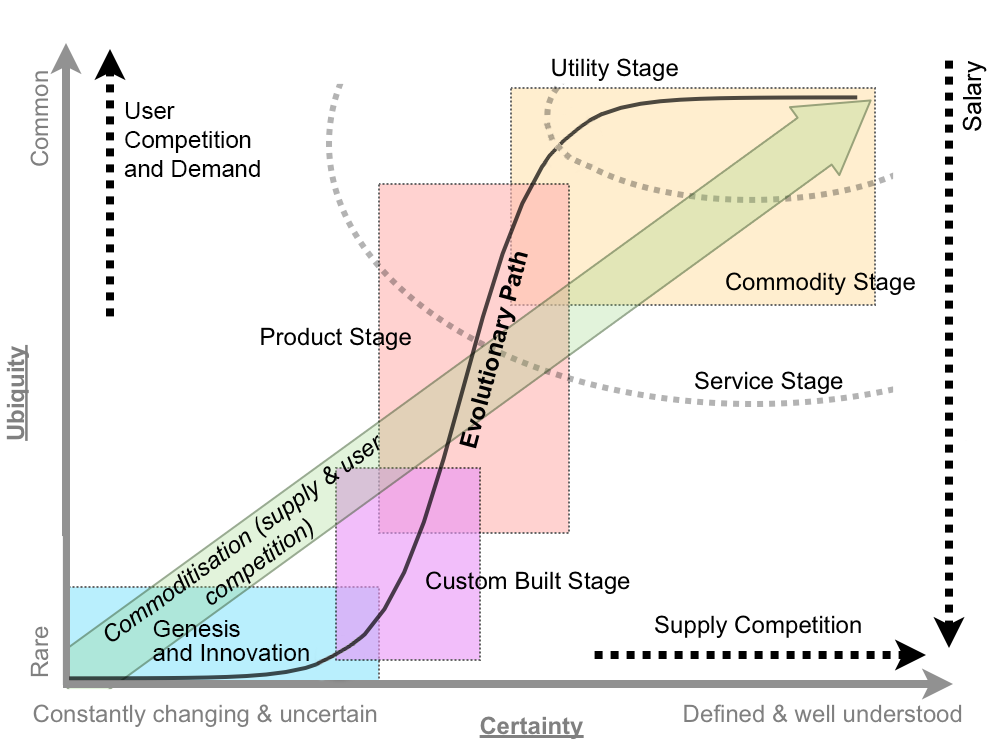

What the Boeing 737 MAX’s crashes can teach us about production business – the effects of commoditisation

Airplane manufacturing is no different from mortgage lending or insulin distribution or make-believe blood analyzing software (or VFX?) —another cash cow for the one percent, bound inexorably for the slaughterhouse.

The beginning of the end was “Boeing’s 1997 acquisition of McDonnell Douglas, a dysfunctional firm with a dilapidated aircraft plant in Long Beach and a CEO (Harry Stonecipher) who liked to use what he called the “Hollywood model” for dealing with engineers: Hire them for a few months when project deadlines are nigh, fire them when you need to make numbers.” And all that came with it. “Stonecipher’s team had driven the last nail in the coffin of McDonnell’s flailing commercial jet business by trying to outsource everything but design, final assembly, and flight testing and sales.”

It is understood, now more than ever, that capitalism does half-assed things like that, especially in concert with computer software and oblivious regulators.

There was something unsettlingly familiar when the world first learned of MCAS in November, about two weeks after the system’s unthinkable stupidity drove the two-month-old plane and all 189 people on it to a horrific death. It smacked of the sort of screwup a 23-year-old intern might have made—and indeed, much of the software on the MAX had been engineered by recent grads of Indian software-coding academies making as little as $9 an hour, part of Boeing management’s endless war on the unions that once represented more than half its employees.

Down in South Carolina, a nonunion Boeing assembly line that opened in 2011 had for years churned out scores of whistle-blower complaints and wrongful termination lawsuits packed with scenes wherein quality-control documents were regularly forged, employees who enforced standards were sabotaged, and planes were routinely delivered to airlines with loose screws, scratched windows, and random debris everywhere.

Shockingly, another piece of the quality failure is that Boeing secured investments from the airlines, Southwest above all, to guarantee support for its production lines in exchange for fair market prices and preferential treatment. This essentially gave Boeing financial stability independent of the quality of its product. “Those partnerships were but one numbers-smoothing mechanism in a diversified tool kit Boeing had assembled over the previous generation for making its complex and volatile business more palatable to Wall Street.”

-

Embedding frame ranges into QuickTime movies with FFmpeg

QuickTime (.mov) files are fundamentally time-based, not frame-based, and so don’t have a built-in, uniform “first frame/last frame” field you can set as numeric frame IDs. Instead, tools like Shotgun Create rely on the timecode track and the movie’s duration to infer frame numbers. If you want Shotgun to pick up a non-default frame range (e.g. start at 1001, end at 1064), you must bake in an SMPTE timecode that corresponds to your desired start frame, and ensure the movie’s duration matches your clip length.

How Shotgun Reads Frame Ranges

- Default start frame is 1. If no timecode metadata is present, Shotgun assumes the movie begins at frame 1.

- Timecode ⇒ frame number. Shotgun Create “honors the timecodes of media sources,” mapping the embedded TC to frame IDs. For example, a 24 fps QuickTime tagged with a start timecode of 00:00:41:17 will be interpreted as beginning on frame 1001 (1001 ÷ 24 fps ≈ 41.71 s).
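The timecode-to-frame mapping described above can be sketched in a few lines of Python. This is a minimal illustration that assumes non-drop-frame timecode at an integer frame rate; the function name `timecode_to_frame` is hypothetical and is not part of Shotgun or FFmpeg.

```python
def timecode_to_frame(timecode: str, fps: int = 24) -> int:
    """Convert a non-drop-frame SMPTE timecode (HH:MM:SS:FF) to a frame number.

    Assumption: integer frame rate and no drop-frame compensation.
    """
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if frames >= fps:
        raise ValueError(f"frame field {frames} is out of range for {fps} fps")
    total_seconds = hours * 3600 + minutes * 60 + seconds
    # Whole seconds contribute fps frames each; the FF field is the remainder.
    return total_seconds * fps + frames


print(timecode_to_frame("00:00:41:17"))  # 1001
```

At 24 fps, 41 whole seconds account for 984 frames and the remaining 17 frames bring the total to 1001.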

Embedding a Start Timecode

QuickTime uses a tmcd (timecode) track. You can bake in an SMPTE track via FFmpeg’s -timecode flag or via Compressor/encoder settings:

- Compute your start TC.

- Desired start frame = 1001

- Frame 1001 at 24 fps ⇒ 1001 ÷ 24 ≈ 41.708 s ⇒ TC 00:00:41:17

- FFmpeg example:

ffmpeg -i input.mov -c copy -timecode 00:00:41:17 output.mov

This adds a timecode track beginning at 00:00:41:17, which Shotgun maps to frame 1001.
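If you need start timecodes for many clips, the arithmetic above is easy to script. The following is a rough sketch, again assuming non-drop-frame timecode and an integer frame rate; `frame_to_timecode` is a made-up helper name, not an FFmpeg or Shotgun function.

```python
def frame_to_timecode(frame: int, fps: int = 24) -> str:
    """Format a frame number as a non-drop-frame SMPTE timecode (HH:MM:SS:FF).

    Assumption: integer frame rate and no drop-frame compensation.
    """
    if frame < 0 or fps <= 0:
        raise ValueError("frame must be >= 0 and fps must be > 0")
    total_seconds, ff = divmod(frame, fps)      # leftover frames within the second
    total_minutes, ss = divmod(total_seconds, 60)
    hh, mm = divmod(total_minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


print(frame_to_timecode(1001))  # 00:00:41:17
```

The resulting string can be passed as the value of the -timecode flag.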

Ensuring the Correct End Frame

Shotgun infers the last frame from the movie’s duration. To end on frame 1064:

- Frame count = 1064 – 1001 + 1 = 64 frames

- Duration = 64 ÷ 24 fps ≈ 2.667 s

FFmpeg trim example:

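ffmpeg -i input.mov -c copy -timecode 00:00:41:17 -t 00:00:02.667 output_trimmed.mov

This results in a 64-frame clip (1001→1064) at 24 fps. Note that 2.667 s is rounded up slightly from 64 ÷ 24 s, so it is worth checking that the output holds exactly 64 frames.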
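The frame count and duration can also be derived from the frame range and turned into an argument list for the command above. This is only a sketch: `build_trim_command` and its parameters are hypothetical names, it uses only the FFmpeg options already shown (-i, -c copy, -timecode, -t), and it builds the argument list without running FFmpeg.

```python
from fractions import Fraction


def build_trim_command(src, dst, start_timecode, first_frame, last_frame, fps=24):
    """Return (frame_count, ffmpeg_args) for a clip spanning first_frame..last_frame.

    Assumption: the start timecode is supplied by the caller and matches first_frame.
    """
    if last_frame < first_frame:
        raise ValueError("last_frame must be >= first_frame")
    frame_count = last_frame - first_frame + 1   # the range is inclusive
    duration = Fraction(frame_count, fps)        # exact duration in seconds
    args = [
        "ffmpeg", "-i", src,
        "-c", "copy",
        "-timecode", start_timecode,
        "-t", f"{float(duration):.3f}",          # rounded to milliseconds
        dst,
    ]
    return frame_count, args


count, args = build_trim_command(
    "input.mov", "output_trimmed.mov", "00:00:41:17", 1001, 1064
)
print(count)           # 64
print(" ".join(args))
```

Keeping the duration as an exact fraction until the last step avoids accumulating rounding error; only the value passed to -t is rounded.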