BREAKING NEWS

LATEST POSTS

-

Why Streaming Content Could Be Hollywood’s Final Act

https://www.forbes.com/sites/carolinereid/2024/10/24/why-streaming-could-be-hollywoods-final-act/

The future of Hollywood was reshaped in 1997 when Reed Hastings and Marc Randolph founded Netflix as a mail-order DVD rental business. Unlike traditional rental stores, Netflix let subscribers keep DVDs as long as they wanted but required returns before they could order more, so the company collected uninterrupted subscription fees. By 2009, Netflix was shipping nearly a billion DVDs annually, but it had already set its sights on streaming. Its streaming service, launched in 2007, was initially held back by limited broadband availability but soon became popular, outpacing the DVD business and bringing Netflix millions of subscribers.

Netflix’s dominance drove traditional media giants to reevaluate their strategies. Disney, initially hesitant, eventually licensed its vast library to Netflix, contributing to the latter’s rise. However, by 2017, Disney pivoted to launch its own platform, Disney+, breaking its Netflix partnership and acquiring 21st Century Fox for content diversification. Disney’s decision sparked a broader industry shift as other studios also developed streaming services, aiming to retain full revenue from direct-to-consumer content instead of sharing it with theaters or traditional networks.

Disney+ quickly gained traction, especially during the pandemic, reaching millions of subscribers and temporarily boosting Disney’s stock. However, the reliance on streaming and subscriber growth strained Disney financially, with high operating costs and content expenses. Content exclusivity backfired, creating complexity for fans, particularly with interconnected Marvel shows, and contributing to user dissatisfaction. Additionally, Disney’s decision to release films like Black Widow simultaneously in theaters and on streaming led to backlash, lawsuits, and lost box office revenue, highlighting the downsides of simultaneous releases.

Facing ballooning expenses and subscriber attrition post-pandemic, Disney’s leadership returned to more traditional revenue models, emphasizing exclusive theater releases and licensing content to third parties. They also introduced cost-saving measures like job cuts and content reductions to stabilize financial losses. This shift echoes a partial return to pre-streaming industry norms as Disney and other studios explore “always-on” channels within their streaming platforms, aiming to balance direct consumer access with sustainable profit models.

-

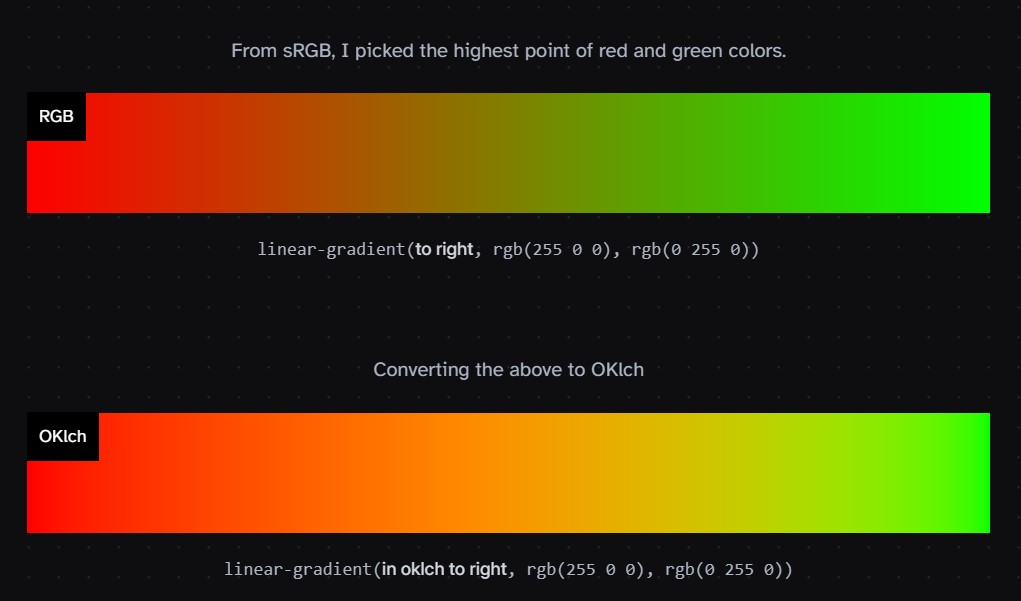

Björn Ottosson – OKlch color space

Björn Ottosson proposed OKLCH in 2020, as the polar form of his Oklab color space, to closely mimic how the human eye perceives color, predicting perceived lightness, chroma, and hue.

The OK in OKLCH comes from Oklab, which Ottosson described as an “OK” Lab color space.

- L: Lightness (the perceived brightness of the color)

- C: Chroma (the intensity or saturation of the color)

- H: Hue (the actual color, such as red, blue, green, etc.)
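The relationship between the L, C, and H components above and the rectangular Oklab axes can be sketched as a simple polar conversion. This is a minimal illustration, assuming hue is given in degrees; the function names `oklch_to_oklab` and `oklab_to_oklch` are hypothetical and not part of any particular library.

```python
import math


def oklch_to_oklab(lightness, chroma, hue_deg):
    """Convert OKLCH (polar) to Oklab (rectangular); hue is assumed to be in degrees."""
    hue_rad = math.radians(hue_deg)
    a = chroma * math.cos(hue_rad)  # green-red axis
    b = chroma * math.sin(hue_rad)  # blue-yellow axis
    return lightness, a, b


def oklab_to_oklch(lightness, a, b):
    """Convert Oklab back to OKLCH, returning hue in the range [0, 360)."""
    chroma = math.hypot(a, b)
    hue_deg = math.degrees(math.atan2(b, a)) % 360.0
    return lightness, chroma, hue_deg


if __name__ == "__main__":
    lab = oklch_to_oklab(0.7, 0.15, 250.0)
    print(lab)
    print(oklab_to_oklch(*lab))
```

Lightness passes through unchanged; only chroma and hue are re-expressed, which is why adjusting H alone rotates the color without changing its perceived brightness.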

-

Motionity – The free, open source web-based motion graphics editor for everyone

https://www.producthunt.com/products/motionity

Motionity is a free and open source animation editor for the web. It’s a mix of After Effects and Canva, with powerful features like keyframing, masking, filters, and more, plus integrations for browsing assets that you can drag and drop into your video.

-

Open Shading Language (OSL) by Larry Gritz

Open Shading Language (OSL) is a small but rich language for programmable shading in advanced renderers and other applications, ideal for describing materials, lights, displacement, and pattern generation.

https://open-shading-language.readthedocs.io/en/main/

https://github.com/AcademySoftwareFoundation/OpenShadingLanguage

https://github.com/sambler/osl-shaders

Learn OSL in a few minutes

https://learnxinyminutes.com/docs/osl/

FEATURED POSTS

-

Glenn Marshall – The Crow

Created with AI ‘Style Transfer’ processes to transform video footage into AI video art.

-

Photography basics: Solid Angle measures

http://www.calculator.org/property.aspx?name=solid+angle

A solid angle measures how large an object appears to an observer looking from a given point, which makes it a natural measure for objects in the sky. It is useful for returning the apparent size of the sun and moon and, in perspective, how much they contribute to lighting. The same apparent size can also be expressed as an ‘angular diameter’.

http://en.wikipedia.org/wiki/Solid_angle

http://www.mathsisfun.com/geometry/steradian.html

A solid angle is expressed in a dimensionless unit called a steradian (symbol: sr). In terms of the total celestial sphere, and before atmospheric scattering, the Sun and the Moon subtend fractional areas of 0.000546% (Sun) and 0.000531% (Moon).

http://en.wikipedia.org/wiki/Solid_angle#Sun_and_Moon

On Earth, the sun is likely closer to 0.00011 sr after atmospheric scattering. The sun as perceived from Earth has an angular diameter of 0.53 degrees, which corresponds to about 0.000067 sr.

http://www.numericana.com/answer/angles.htm

The mean angular diameter of the full moon is 2θ = 0.52° (it varies with time around that average, by about 0.009°). This translates into a solid angle of 0.0000647 sr, which means that the whole night sky covers a solid angle roughly one hundred thousand times greater than the full moon.
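The conversion from angular diameter to solid angle quoted above can be checked with a short sketch. It assumes the object is a circular disc and uses the standard spherical-cap formula Ω = 2π(1 − cos θ), where θ is half the angular diameter; the function name `disc_solid_angle` is hypothetical, chosen only for this illustration.

```python
import math


def disc_solid_angle(angular_diameter_deg):
    """Solid angle in steradians of a circular disc with the given angular diameter.

    Uses the spherical-cap formula 2*pi*(1 - cos(theta)), where theta is
    half the angular diameter.
    """
    half_angle = math.radians(angular_diameter_deg) / 2.0
    return 2.0 * math.pi * (1.0 - math.cos(half_angle))


if __name__ == "__main__":
    moon = disc_solid_angle(0.52)   # mean full-moon diameter quoted above
    night_sky = 2.0 * math.pi       # visible hemisphere, in steradians
    print(f"moon: {moon:.3e} sr")
    print(f"night sky / moon: {night_sky / moon:.0f}")
```

For a 0.52° disc this gives roughly 0.0000647 sr, and dividing the visible hemisphere (2π sr) by that value gives a ratio close to one hundred thousand, in line with the figures above.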

More info

http://lcogt.net/spacebook/using-angles-describe-positions-and-apparent-sizes-objects

http://amazing-space.stsci.edu/glossary/def.php.s=topic_astronomy

Angular Size

The apparent size of an object as seen by an observer; expressed in units of degrees (of arc), arc minutes, or arc seconds. The moon, as viewed from the Earth, has an angular diameter of one-half a degree.

The angle covered by the diameter of the full moon is about 31 arcmin or 1/2°, so astronomers would say the Moon’s angular diameter is 31 arcmin, or the Moon subtends an angle of 31 arcmin.