LATEST POSTS

-

HumanDiT – Pose-Guided Diffusion Transformer for Long-form Human Motion Video Generation

https://agnjason.github.io/HumanDiT-page

By inputting a single character image and template pose video, our method can generate vocal avatar videos featuring not only pose-accurate rendering but also realistic body shapes.

-

DynVFX – Augmenting Real Videos with Dynamic Content

Given an input video and a simple user-provided text instruction describing the desired content, our method synthesizes dynamic objects or complex scene effects that naturally interact with the existing scene over time. The position, appearance, and motion of the new content are seamlessly integrated into the original footage while accounting for camera motion, occlusions, and interactions with other dynamic objects in the scene, resulting in a cohesive and realistic output video.

https://dynvfx.github.io/sm/index.html

-

ByteDance OmniHuman-1

https://omnihuman-lab.github.io

They propose an end-to-end multimodality-conditioned human video generation framework named OmniHuman, which can generate human videos based on a single human image and motion signals (e.g., audio only, video only, or a combination of audio and video). OmniHuman introduces a multimodality motion conditioning mixed training strategy, allowing the model to benefit from scaling up mixed-conditioning data. This overcomes the scarcity of high-quality data that limited previous end-to-end approaches. OmniHuman significantly outperforms existing methods, generating extremely realistic human videos based on weak signal inputs, especially audio. It supports image inputs of any aspect ratio, whether they are portraits, half-body, or full-body images, delivering more lifelike and high-quality results across various scenarios.

-

Conda – an open source management system for installing multiple versions of software packages and their dependencies into a virtual environment

https://anaconda.org/anaconda/conda

https://docs.conda.io/projects/conda/en/latest/user-guide/getting-started.html

NOTE: The company recently changed its TOS, and the service now incurs costs for teams above a threshold.

Use MicroMamba instead.

-

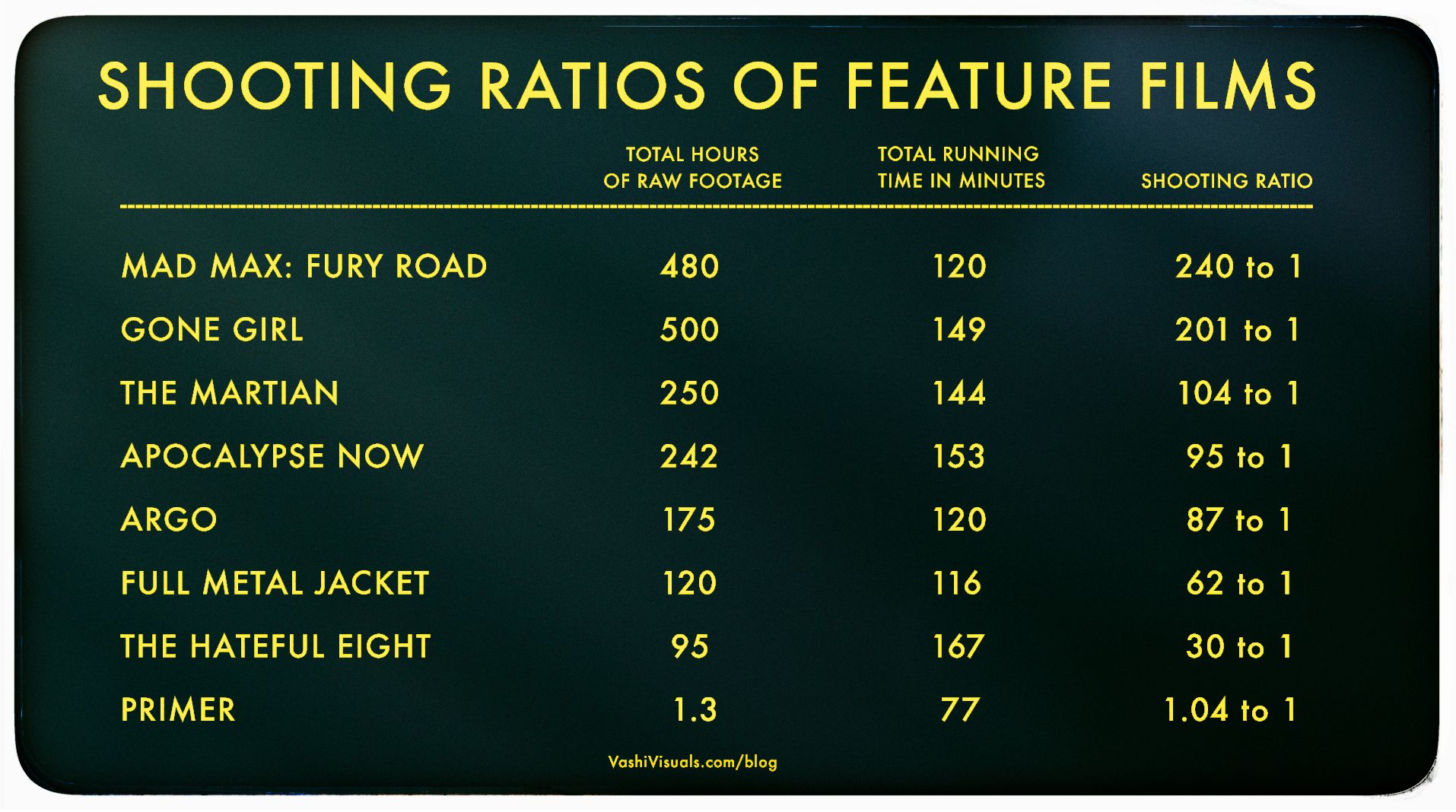

Vashi Nedomansky – Shooting ratios of feature films

In the Golden Age of Hollywood (1930-1959), a 10:1 shooting ratio was the norm—a 90-minute film meant about 15 hours of footage. Directors like Alfred Hitchcock famously kept it tight with a 3:1 ratio, giving studios little wiggle room in the edit.
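
The arithmetic behind those figures can be sketched in a few lines. This is only an illustration of the ratio calculation; the function name `footage_hours` is hypothetical and does not come from the original post.

```python
def footage_hours(runtime_minutes: float, shooting_ratio: float) -> float:
    """Hours of raw footage implied by a runtime and an N:1 shooting ratio."""
    return runtime_minutes * shooting_ratio / 60.0


# A 90-minute film shot at 10:1
print(footage_hours(90, 10))  # 15.0
# The same film at a 3:1 ratio
print(footage_hours(90, 3))   # 4.5
```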

Fast forward to today: the digital era has sent shooting ratios skyrocketing. Affordable cameras roll endlessly, capturing multiple takes, resets, and everything in between. Gone are the disciplined “Action to Cut” days of film.

https://en.wikipedia.org/wiki/Shooting_ratio

-

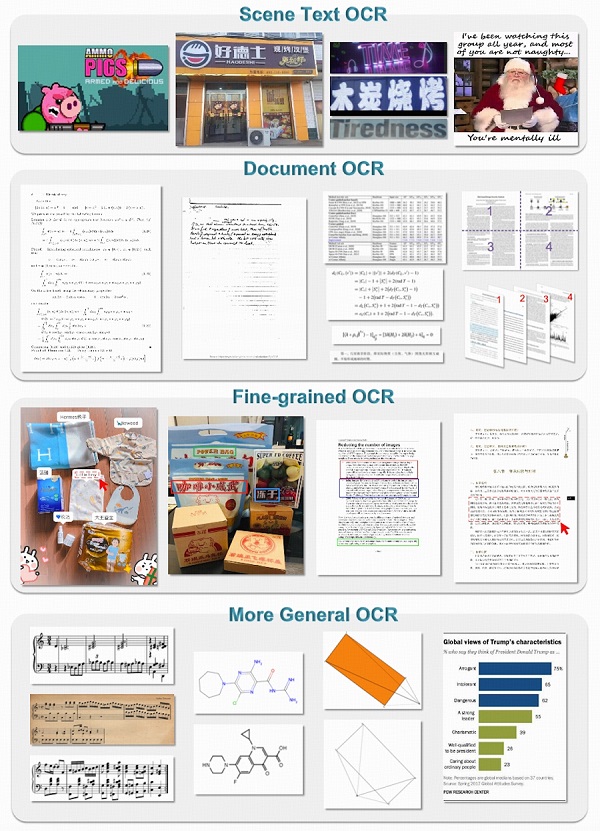

General OCR Theory – Towards OCR-2.0 via a Unified End-to-end Model – HF Transformers implementation

https://huggingface.co/stepfun-ai/GOT-OCR-2.0-hf

GOT-OCR2 works on a wide range of tasks, including plain document OCR, scene text OCR, formatted document OCR, and even OCR for tables, charts, mathematical formulas, geometric shapes, molecular formulas and sheet music.

-

QNTM – Developer Philosophy

- Avoid, at all costs, arriving at a scenario where the ground-up rewrite starts to look attractive

- Aim to be 90% done in 50% of the available time

- Automate good practice

- Think about pathological data

- There is usually a simpler way to write it

- Write code to be testable

- It is insufficient for code to be provably correct; it should be obviously, visibly, trivially correct

FEATURED POSTS

-

braindump.me – Building an AI game studio: what we’ve learned so far

https://braindump.me/blog-posts/building-an-ai-game-studio

Braindump is an attempt to imagine what game creation could be like in the brave new world of LLMs and generative AI. It aims to give you an entire AI game studio, complete with coders, artists, and so on, to help you create your dream game.

-

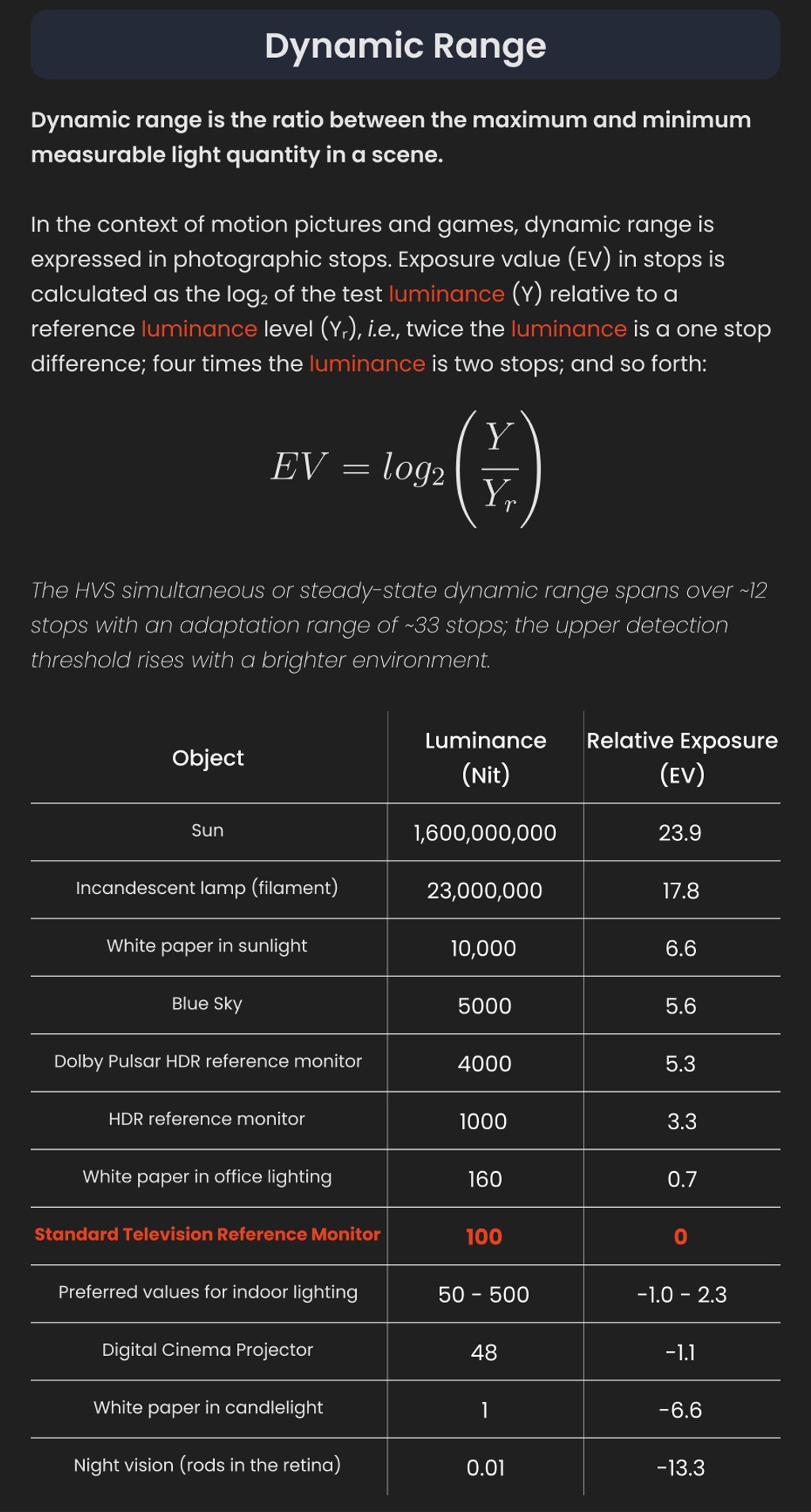

GretagMacbeth Color Checker Numeric Values and Middle Gray

The human eye perceives the midpoint of scene brightness not at 50% of the linear light energy but at roughly 18% of it. We are biased to perceive more detail in dark and high-contrast areas. A Macbeth chart helps calibrate a photographic capture back into this “human perspective” of the world.

https://en.wikipedia.org/wiki/Middle_gray

In photography, painting, and other visual arts, middle gray or middle grey is a tone that is perceptually about halfway between black and white on a lightness scale. In photography and printing, it is typically defined as 18% reflectance in visible light.

Light meters, cameras, and pictures are often calibrated using an 18% gray card or a color reference card such as a ColorChecker. On the assumption that 18% is similar to the average reflectance of a scene, a grey card can be used to estimate the required exposure of the film.
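
To see why 18% reflectance reads as "halfway", here is a minimal sketch that converts linear reflectance to perceptual lightness. It assumes the standard CIE 1976 L* formula and the sRGB transfer function, neither of which is spelled out in the excerpts above; the function names are hypothetical.

```python
def cie_lightness(reflectance: float) -> float:
    """CIE 1976 L* (0 to 100) for a relative luminance in [0, 1]."""
    delta = 6 / 29
    if reflectance > delta ** 3:
        f = reflectance ** (1 / 3)
    else:
        # Linear segment used near black
        f = reflectance / (3 * delta ** 2) + 4 / 29
    return 116 * f - 16


def srgb_encode(linear: float) -> float:
    """sRGB-encoded value in [0, 1] for a linear value in [0, 1]."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


# 18% linear reflectance lands near the middle of the lightness scale
print(round(cie_lightness(0.18), 1))    # 49.5
# 50% linear reflectance is perceived as much lighter than "halfway"
print(round(cie_lightness(0.50), 1))    # 76.1
# 18% gray as an 8-bit sRGB code value
print(round(srgb_encode(0.18) * 255))   # 118
```

Under these assumptions, an 18% gray card sits at about L* 50, while a surface reflecting 50% of the light already reads as roughly three quarters of the way to white.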

https://en.wikipedia.org/wiki/ColorChecker

-

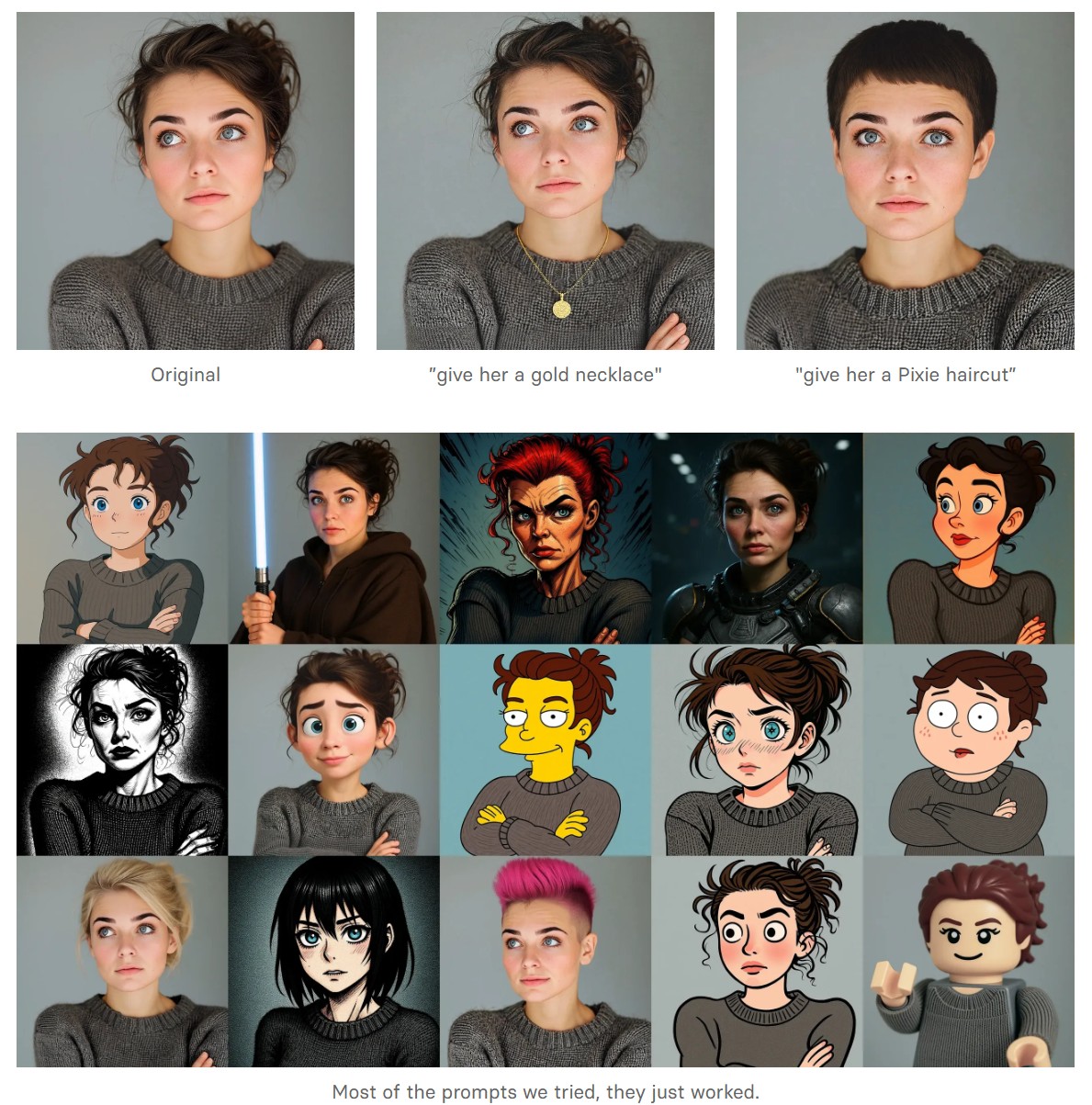

Black Forest Labs released FLUX.1 Kontext

https://replicate.com/blog/flux-kontext

https://replicate.com/black-forest-labs/flux-kontext-pro

There are three models. Two are available now, and a third, open-weight version is coming soon:

- FLUX.1 Kontext [pro]: State-of-the-art performance for image editing. High-quality outputs, great prompt following, and consistent results.

- FLUX.1 Kontext [max]: A premium model that brings maximum performance, improved prompt adherence, and high-quality typography generation without compromise on speed.

- Coming soon: FLUX.1 Kontext [dev]: An open-weight, guidance-distilled version of Kontext.

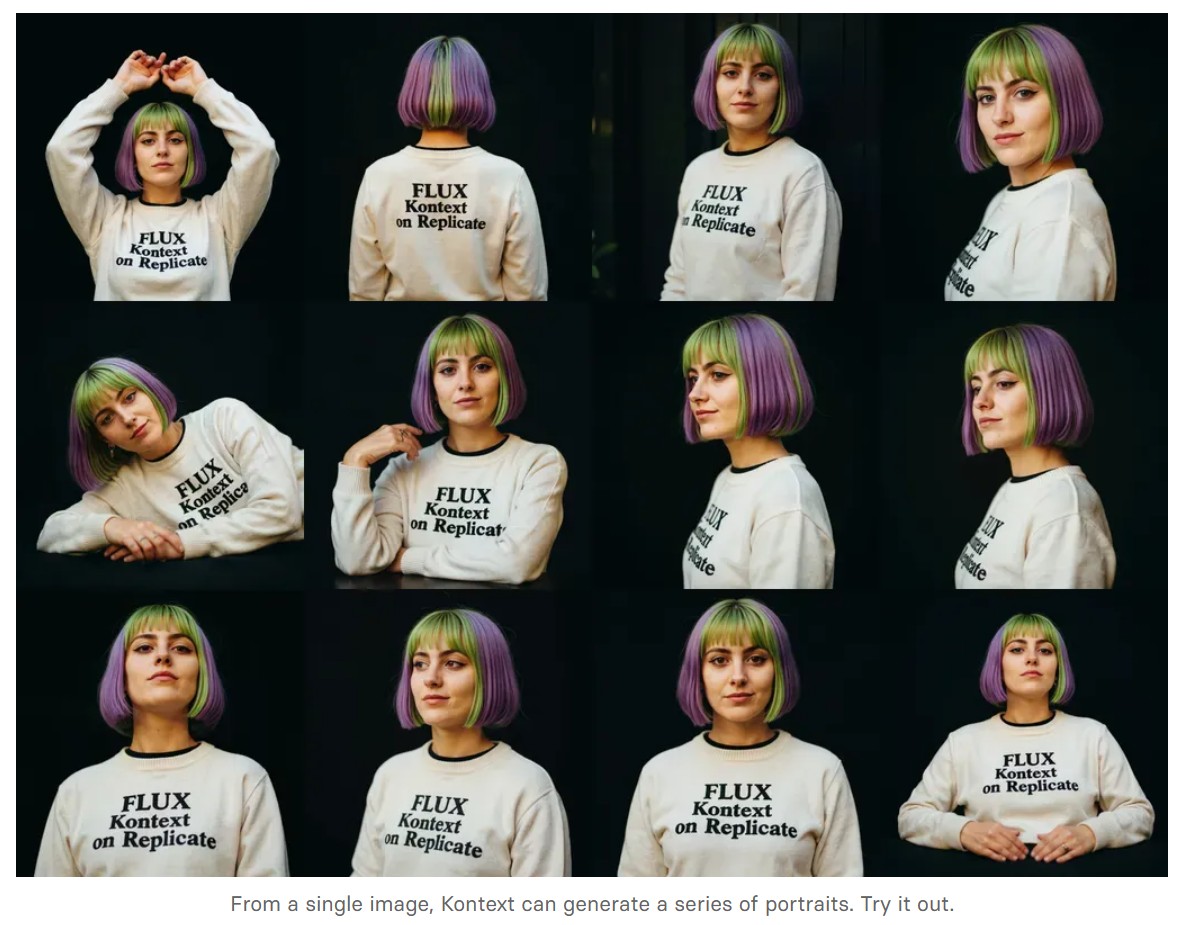

We’re so excited about what Kontext can do that we’ve created a collection of models on Replicate to give you ideas:

- Multi-image kontext: Combine two images into one

- Portrait series: Generate a series of portraits from a single image

- Change haircut: Change a person’s hair style and color

- Iconic locations: Put yourself in front of famous landmarks

- Professional headshot: Generate a professional headshot from any image