BREAKING NEWS

LATEST POSTS

-

Meta Avat3r – Large Animatable Gaussian Reconstruction Model for High-fidelity 3D Head Avatars

https://tobias-kirschstein.github.io/avat3r

Avat3r takes 4 input images of a person’s face and generates an animatable 3D head avatar in a single forward pass. The resulting 3D head representation can be animated at interactive rates. The entire creation process of the 3D avatar, from taking 4 smartphone pictures to the final result, can be executed within minutes.

https://www.uploadvr.com/meta-researchers-generate-photorealistic-avatars-from-just-four-selfies

-

Shadow of Mordor’s brilliant Nemesis system is locked away by a Warner Bros patent until 2036, despite studio shutdown

The Nemesis system, for those unfamiliar, is a clever in-game mechanic which tracks a player’s actions to create enemies that feel capable of remembering past encounters. In the studio’s Middle-earth games, this allowed foes to rise through the ranks and enact revenge.

The patent itself – which you can view here – was originally filed back in 2016, before it was granted in 2021. It is dubbed “Nemesis characters, nemesis forts, social vendettas and followers in computer games”. As it stands, the patent has an expiration date of 11th August, 2036.

-

Crypto Mining Attack via ComfyUI/Ultralytics in 2024

https://github.com/ultralytics/ultralytics/issues/18037

zopieux on Dec 5, 2024 : Ultralytics was attacked (or did it on purpose, waiting for a post mortem there), 8.3.41 contains nefarious code downloading and running a crypto miner hosted as a GitHub blob.
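A quick local check can tell whether an environment has the release named in the issue installed. The sketch below is illustrative only: the `FLAGGED` set holds just 8.3.41 because that is the only version cited above, and the helper names `is_flagged` and `installed_version` are hypothetical.

```python
from importlib import metadata

# Only the version named in the issue above. Extend this set from the
# project's own advisory rather than treating it as complete.
FLAGGED = {"8.3.41"}


def is_flagged(version, flagged=FLAGGED):
    """Return True if the given version string is in the flagged set."""
    return version.strip() in flagged


def installed_version(package="ultralytics"):
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version()
    if found is None:
        print("ultralytics is not installed")
    elif is_flagged(found):
        print(f"ultralytics {found} is flagged: uninstall it and audit this machine")
    else:
        print(f"ultralytics {found} is not in the flagged set")
```

A clean result here only means the installed version is not in the set; it does not prove the machine was never exposed.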

-

Walt Disney Animation Abandons Longform Streaming Content

https://www.hollywoodreporter.com/business/business-news/tiana-disney-series-shelved-1236153297

A spokesperson confirmed there will be some layoffs in its Vancouver studio as a result of this shift in business strategy. In addition to the Tiana series, the studio is also scrapping an unannounced feature-length project that was set to go straight to Disney+.

Insiders say that Walt Disney Animation remains committed to releasing one theatrical film per year in addition to other shorts and special projects.

-

Andreas Horn – The 9 algorithms

The illustration below highlights the algorithms most frequently utilized in our everyday activities: They play a key role in everything we do from online shopping recommendations, navigation apps, social media, email spam filters and even smart home devices.

🔹 𝗦𝗼𝗿𝘁𝗶𝗻𝗴 𝗔𝗹𝗴𝗼𝗿𝗶𝘁𝗵𝗺

– Organize data for efficiency.

➜ Example: Sorting email threads or search results.

🔹 𝗗𝗶𝗷𝗸𝘀𝘁𝗿𝗮’𝘀 𝗔𝗹𝗴𝗼𝗿𝗶𝘁𝗵𝗺

– Finds the shortest path in networks.

➜ Example: Google Maps driving routes.

🔹 𝗧𝗿𝗮𝗻𝘀𝗳𝗼𝗿𝗺𝗲𝗿𝘀

– AI models that understand context and meaning.

➜ Example: ChatGPT, Claude and other LLMs.

🔹 𝗟𝗶𝗻𝗸 𝗔𝗻𝗮𝗹𝘆𝘀𝗶𝘀

– Ranks pages and builds connections.

➜ Example: Google PageRank, LinkedIn recommendations.

🔹 𝗥𝗦𝗔 𝗔𝗹𝗴𝗼𝗿𝗶𝘁𝗵𝗺

– Encrypts and secures data communication.

➜ Example: WhatsApp encryption or online banking.

🔹 𝗜𝗻𝘁𝗲𝗴𝗲𝗿 𝗙𝗮𝗰𝘁𝗼𝗿𝗶𝘇𝗮𝘁𝗶𝗼𝗻

– Secures cryptographic systems.

➜ Example: Protecting sensitive data in blockchain.

🔹 𝗖𝗼𝗻𝘃𝗼𝗹𝘂𝘁𝗶𝗼𝗻𝗮𝗹 𝗡𝗲𝘂𝗿𝗮𝗹 𝗡𝗲𝘁𝘄𝗼𝗿𝗸𝘀 (𝗖𝗡𝗡𝘀)

– Recognizes patterns in images and videos.

➜ Example: Facial recognition, object detection in self-driving cars.

🔹 𝗛𝘂𝗳𝗳𝗺𝗮𝗻 𝗖𝗼𝗱𝗶𝗻𝗴

– Compresses data efficiently.

➜ Example: JPEG and MP3 file compression.

🔹 𝗦𝗲𝗰𝘂𝗿𝗲 𝗛𝗮𝘀𝗵 𝗔𝗹𝗴𝗼𝗿𝗶𝘁𝗵𝗺 (𝗦𝗛𝗔)

– Ensures data integrity.

➜ Example: Password hashing, digital signatures.
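To make one entry in the list concrete, here is a minimal sketch of Dijkstra's algorithm using a binary heap. The `roads` graph and its travel times are made up for illustration, and real routing services layer far more on top of this (live traffic, heuristics, precomputation).

```python
import heapq


def dijkstra(graph, source):
    """Return the shortest distance from source to every reachable node.

    graph maps each node to a dict of {neighbor: non-negative edge weight}.
    """
    dist = {source: 0}
    queue = [(0, source)]
    while queue:
        d, node = heapq.heappop(queue)
        if d > dist.get(node, float("inf")):
            continue  # stale queue entry; a shorter path was already found
        for neighbor, weight in graph.get(node, {}).items():
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                heapq.heappush(queue, (candidate, neighbor))
    return dist


# Hypothetical road network: travel times in minutes between junctions.
roads = {
    "A": {"B": 4, "C": 1},
    "C": {"B": 2, "D": 7},
    "B": {"D": 3},
    "D": {},
}
print(dijkstra(roads, "A"))
```

From A, the direct road to B costs 4, but going through C costs 1 + 2 = 3, so the algorithm settles on the detour and reaches D in 6.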

-

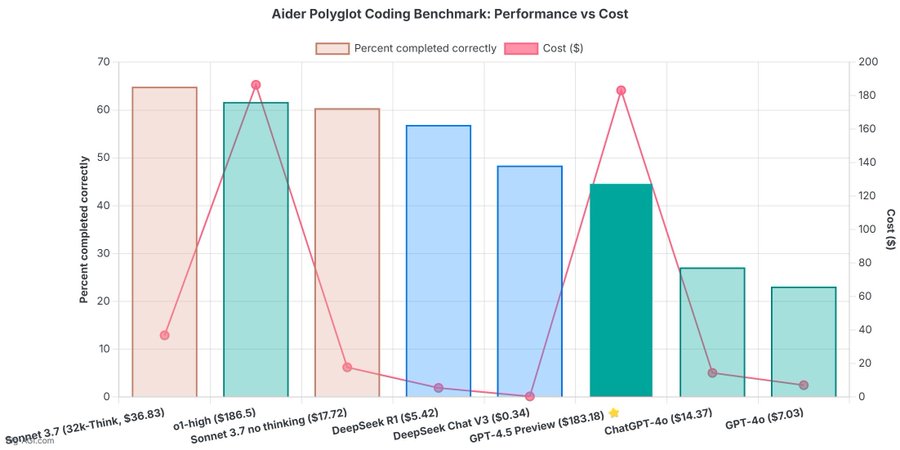

OpenAI 4.5 model arrives to mixed reviews

The verdict is in: OpenAI’s newest and most capable traditional AI model, GPT-4.5, is big, expensive, and slow, providing marginally better performance than GPT-4o at 30x the cost for input and 15x the cost for output. The new model seems to prove that longstanding rumors of diminishing returns in training unsupervised-learning LLMs were correct and that the so-called “scaling laws” cited by many for years have possibly met their natural end.

-

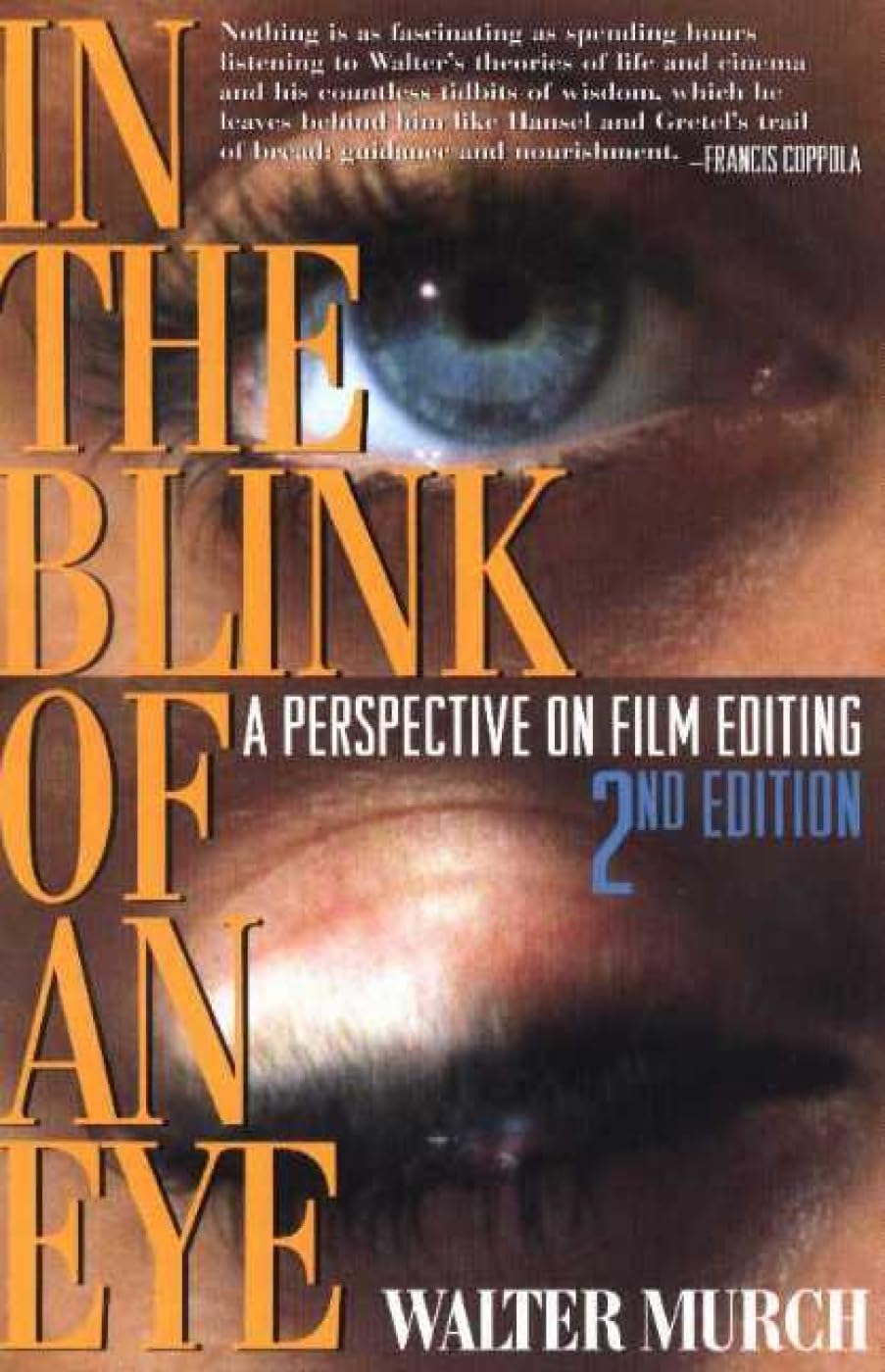

Walter Murch – “In the Blink of an Eye” – A perspective on cut editing

https://www.amazon.ca/Blink-Eye-Revised-2nd/dp/1879505622

Celebrated film editor Walter Murch’s vivid, multifaceted, thought-provoking essay on film editing. Starting with the most basic editing question — Why do cuts work? — Murch takes the reader on a wonderful ride through the aesthetics and practical concerns of cutting film. Along the way, he offers unique insights on such subjects as continuity and discontinuity in editing, dreaming, and reality; criteria for a good cut; the blink of the eye as an emotional cue; digital editing; and much more. In this second edition, Murch revises his popular first edition’s lengthy meditation on digital editing in light of technological changes. Francis Ford Coppola says about this book: “Nothing is as fascinating as spending hours listening to Walter’s theories of life, cinema and the countless tidbits of wisdom that he leaves behind like Hansel and Gretel’s trail of breadcrumbs…….”

-

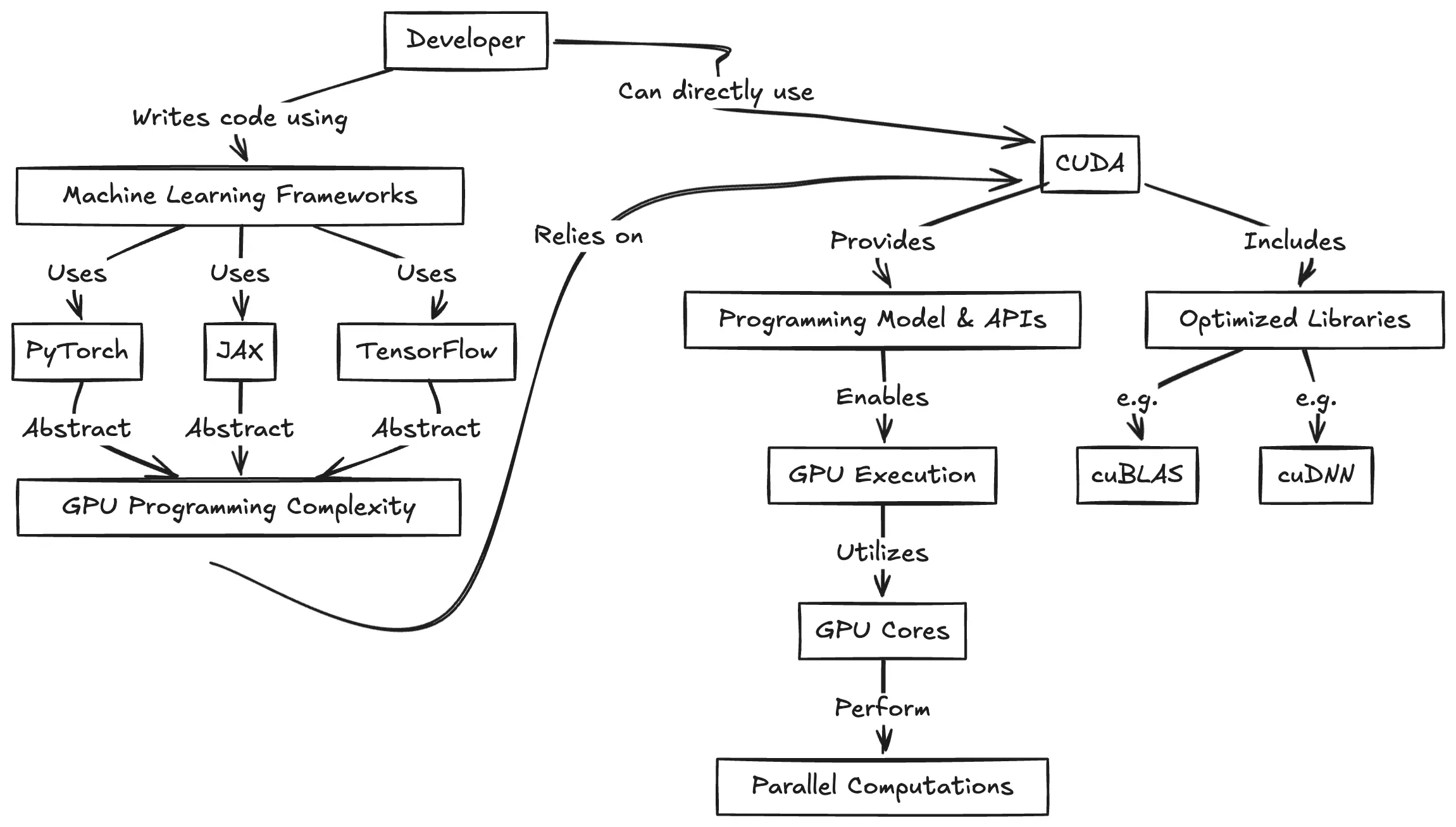

CUDA Programming for Python Developers

https://www.pyspur.dev/blog/introduction_cuda_programming

Check your CUDA version; it is the release number reported here:

    >>> nvcc --version
    nvcc: NVIDIA (R) Cuda compiler driver
    Copyright (c) 2005-2024 NVIDIA Corporation
    Built on Wed_Apr_17_19:36:51_Pacific_Daylight_Time_2024
    Cuda compilation tools, release 12.5, V12.5.40
    Build cuda_12.5.r12.5/compiler.34177558_0

or from here:

    >>> nvidia-smi
    Mon Jun 16 12:35:20 2025
    +-----------------------------------------------------------------------------------------+
    | NVIDIA-SMI 555.85                 Driver Version: 555.85         CUDA Version: 12.5     |
    |-----------------------------------------+------------------------+----------------------+
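The same check can be scripted from Python. This is a small sketch, assuming `nvcc` is on the PATH and that its output contains a "release X.Y" string as in the listing above; the helper names are hypothetical.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(output):
    """Extract the 'release X.Y' number from `nvcc --version` output."""
    match = re.search(r"release\s+(\d+\.\d+)", output)
    return match.group(1) if match else None


def cuda_release():
    """Return the toolkit release reported by nvcc, or None if nvcc is not on PATH."""
    if shutil.which("nvcc") is None:
        return None
    result = subprocess.run(
        ["nvcc", "--version"], capture_output=True, text=True, check=False
    )
    return parse_nvcc_release(result.stdout)


# Parse the line from the listing above, then query the local machine.
sample = "Cuda compilation tools, release 12.5, V12.5.40"
print(parse_nvcc_release(sample))  # prints 12.5
print(cuda_release())
```

Keeping the parsing separate from the subprocess call lets the parser be tested on a machine without a CUDA toolkit installed.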

FEATURED POSTS

-

Embedding frame ranges into Quicktime movies with FFmpeg

QuickTime (.mov) files are fundamentally time-based, not frame-based, and so don’t have a built-in, uniform “first frame/last frame” field you can set as numeric frame IDs. Instead, tools like Shotgun Create rely on the timecode track and the movie’s duration to infer frame numbers. If you want Shotgun to pick up a non-default frame range (e.g. start at 1001, end at 1064), you must bake in an SMPTE timecode that corresponds to your desired start frame, and ensure the movie’s duration matches your clip length.

How Shotgun Reads Frame Ranges

- Default start frame is 1. If no timecode metadata is present, Shotgun assumes the movie begins at frame 1.

- Timecode ⇒ frame number. Shotgun Create “honors the timecodes of media sources,” mapping the embedded TC to frame IDs. For example, a 24 fps QuickTime tagged with a start timecode of 00:00:41:17 will be interpreted as beginning on frame 1001 (1001 ÷ 24 fps ≈ 41.71 s).
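The frame-to-timecode arithmetic above can be sketched in a few lines of Python. This assumes an integer frame rate and non-drop-frame timecode, and it follows the mapping implied by the example (frame N corresponds to N frames counted from 00:00:00:00); the function names are illustrative.

```python
def frame_to_timecode(frame, fps=24):
    """Convert an absolute frame number to non-drop-frame HH:MM:SS:FF."""
    seconds, ff = divmod(frame, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def timecode_to_frame(timecode, fps=24):
    """Convert non-drop-frame HH:MM:SS:FF back to an absolute frame number."""
    hh, mm, ss, ff = (int(part) for part in timecode.split(":"))
    return (hh * 3600 + mm * 60 + ss) * fps + ff


print(frame_to_timecode(1001))           # 00:00:41:17
print(timecode_to_frame("00:00:41:17"))  # 1001
```

Here 1001 divided by 24 gives 41 whole seconds with 17 frames left over, which is where the `:17` in the timecode comes from.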

Embedding a Start Timecode

QuickTime uses a tmcd (timecode) track. You can bake in an SMPTE track via FFmpeg’s -timecode flag or via Compressor/encoder settings:

- Compute your start TC.

- Desired start frame = 1001

- Frame 1001 at 24 fps ⇒ 1001 ÷ 24 ≈ 41.708 s ⇒ TC 00:00:41:17

- FFmpeg example:

    ffmpeg -i input.mov \
      -c copy \
      -timecode 00:00:41:17 \
      output.mov

This adds a timecode track beginning at 00:00:41:17, which Shotgun maps to frame 1001.

Ensuring the Correct End Frame

Shotgun infers the last frame from the movie’s duration. To end on frame 1064:

- Frame count = 1064 – 1001 + 1 = 64 frames

- Duration = 64 ÷ 24 fps ≈ 2.667 s

FFmpeg trim example:

    ffmpeg -i input.mov \
      -c copy \
      -timecode 00:00:41:17 \
      -t 00:00:02.667 \
      output_trimmed.mov

This results in a 64-frame clip (1001→1064) at 24 fps.
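For pipelines that generate these commands per shot, the start timecode and duration can be derived from the frame range. The sketch below only builds the argument list and does not run FFmpeg; `trim_args` is a hypothetical helper, and it assumes an integer frame rate and non-drop-frame timecode.

```python
def trim_args(src, dst, start_frame, end_frame, fps=24):
    """Build an ffmpeg argument list for an inclusive frame range."""
    frame_count = end_frame - start_frame + 1  # inclusive: 1001..1064 is 64 frames
    duration = frame_count / fps               # seconds
    seconds, ff = divmod(start_frame, fps)
    hh, mm, ss = seconds // 3600, seconds % 3600 // 60, seconds % 60
    timecode = f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"
    return [
        "ffmpeg", "-i", src,
        "-c", "copy",
        "-timecode", timecode,
        "-t", f"{duration:.3f}",  # duration in seconds, rounded to milliseconds
        dst,
    ]


args = trim_args("input.mov", "output_trimmed.mov", 1001, 1064)
print(" ".join(args))
```

Note that the duration is rounded to milliseconds, as in the command above, so it is worth verifying the frame count of the result in your review tool.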

-

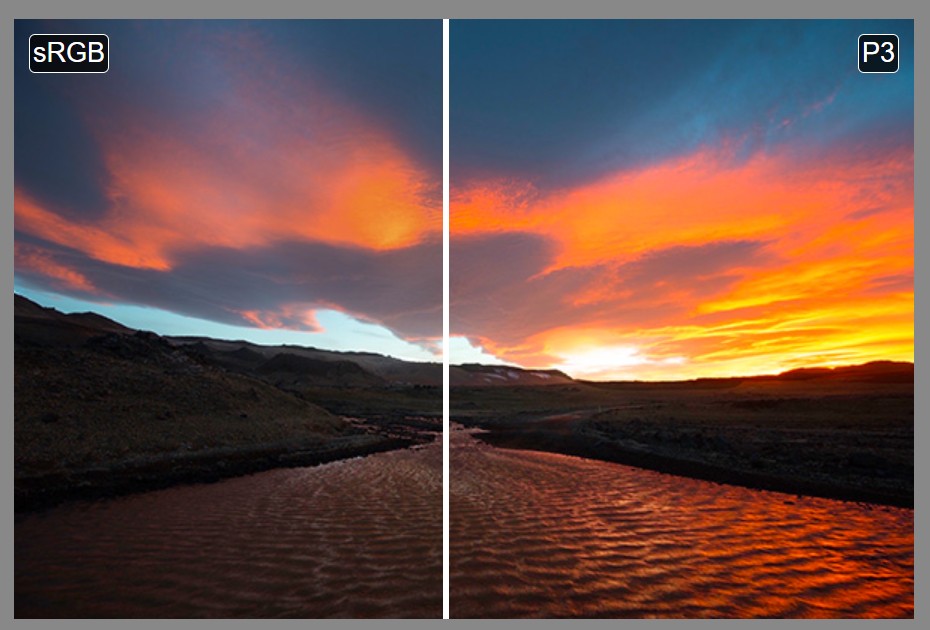

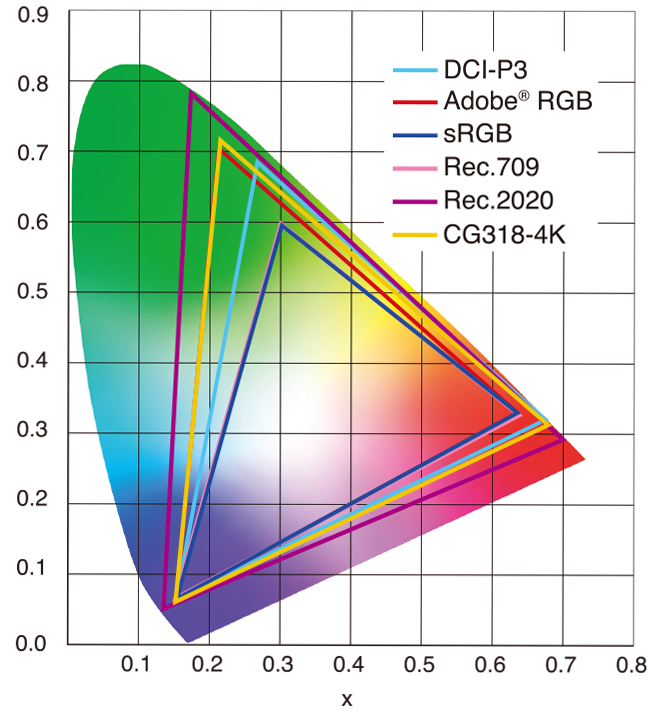

Scene Referred vs Display Referred color workflows

Display Referred is tied to the target hardware; as such, it bakes color requirements into every type of media output request.

Scene Referred instead uses a common, unified wide gamut and targets each audience through CDL and DI libraries.

That way, color information stays untouched and is only “transformed” as and when needed.

Sources:

– Victor Perez – Color Management Fundamentals & ACES Workflows in Nuke

– https://z-fx.nl/ColorspACES.pdf

– Wicus

-

sRGB vs REC709 – An introduction and FFmpeg implementations

1. Basic Comparison

- What they are

  - sRGB: A standard “web”/computer-display RGB color space defined by IEC 61966-2-1. It’s used for most monitors, cameras, printers, and the vast majority of images on the Internet.

  - Rec. 709: An HD-video color space defined by ITU-R BT.709. It’s the go-to standard for HDTV broadcasts, Blu-ray discs, and professional video pipelines.

- Why they exist

  - sRGB: Ensures consistent colors across different consumer devices (PCs, phones, webcams).

  - Rec. 709: Ensures consistent colors across video production and playback chains (cameras → editing → broadcast → TV).

- What you’ll see

  - On your desktop or phone, images tagged sRGB will look “right” without extra tweaking.

  - On an HDTV or video-editing timeline, footage tagged Rec. 709 will display accurate contrast and hue on broadcast-grade monitors.

2. Digging Deeper

| Feature | sRGB | Rec. 709 |
| --- | --- | --- |
| White point | D65 (6504 K), same for both | D65 (6504 K) |
| Primaries (x,y) | R: (0.640, 0.330) G: (0.300, 0.600) B: (0.150, 0.060) | R: (0.640, 0.330) G: (0.300, 0.600) B: (0.150, 0.060) |
| Gamut size | Identical triangle on CIE 1931 chart | Identical to sRGB |
| Gamma / transfer | Piecewise curve: approximately 2.2 with linear toe | Display gamma is a power law, γ≈2.4 per BT.1886 (often approximated as 2.2 in practice); the BT.709 camera encoding curve is itself piecewise with a linear toe |
| Matrix coefficients | N/A (pure RGB usage) | Y = 0.2126 R + 0.7152 G + 0.0722 B (Rec. 709 matrix) |
| Typical bit-depth | 8-bit/channel (with 16-bit variants) | 8-bit/channel (10-bit for professional video) |
| Usage metadata | Tagged as “sRGB” in image files (PNG, JPEG, etc.) | Tagged as “bt709” in video containers (MP4, MOV) |
| Color range | Full-range RGB (0–255) | Studio-range Y′CbCr (Y′ [16–235], Cb/Cr [16–240]) |
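Three rows of the comparison translate directly into arithmetic: the sRGB piecewise curve, the Rec. 709 luma weights, and the studio-range mapping. The sketch below uses the standard sRGB constants and the luma coefficients listed above; the function names are illustrative, and it works on single normalized values rather than whole images.

```python
def srgb_to_linear(c):
    """Decode an sRGB-encoded component in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92  # linear toe near black
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c):
    """Encode a linear-light component in [0, 1] with the sRGB curve."""
    if c <= 0.0031308:
        return c * 12.92
    return 1.055 * c ** (1 / 2.4) - 0.055


def rec709_luma(r, g, b):
    """Luma from gamma-encoded R'G'B' using the Rec. 709 coefficients."""
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def luma_to_studio_8bit(y):
    """Map normalized luma [0, 1] to the 8-bit studio range [16, 235]."""
    return round(16 + 219 * y)


print(srgb_to_linear(0.5))                            # mid-gray code value as linear light
print(luma_to_studio_8bit(rec709_luma(0.0, 0.0, 0.0)))  # black
print(luma_to_studio_8bit(rec709_luma(1.0, 1.0, 1.0)))  # white
```

Black lands on code value 16 and white on 235, which is the studio range from the last row of the comparison.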

Why the Small Differences Matter
