BREAKING NEWS

LATEST POSTS

-

Narcis Calin’s Galaxy Engine – A free, open source simulation software

This 2025 I decided to start learning how to code, so I installed Visual Studio and I started looking into C++. After days of watching tutorials and guides about the basics of C++ and programming, I decided to make something physics-related. I started with a dot that fell to the ground and then I wanted to simulate gravitational attraction, so I made 2 circles attracting each other. I thought it was really cool to see something I made with code actually work, so I kept building on top of that small, basic program. And here we are after roughly 8 months of learning programming. This is Galaxy Engine, and it is a simulation software I have been making ever since I started my learning journey. It currently can simulate gravity, dark matter, galaxies, the Big Bang, temperature, fluid dynamics, breakable solids, planetary interactions, etc. The program can run many tens of thousands of particles in real time on the CPU thanks to the Barnes-Hut algorithm, mixed with Morton curves. It also includes its own PBR 2D path tracer with BVH optimizations. The path tracer can simulate a bunch of stuff like diffuse lighting, specular reflections, refraction, internal reflection, fresnel, emission, dispersion, roughness, IOR, nested IOR and more! I tried to make the path tracer closer to traditional 3D render engines like V-Ray. I honestly never imagined I would go this far with programming, and it has been an amazing learning experience so far. I think that mixing this knowledge with my 3D knowledge can unlock countless new possibilities. In case you are curious about Galaxy Engine, I made it completely free and Open-Source so that anyone can build and compile it locally! You can find the source code in GitHub

https://github.com/NarcisCalin/Galaxy-Engine

-

Introduction to BytesIO

When you’re working with binary data in Python—whether that’s image bytes, network payloads, or any in-memory binary stream—you often need a file-like interface without touching the disk. That’s where

BytesIOfrom the built-iniomodule comes in handy. It lets you treat a bytes buffer as if it were a file.What Is

BytesIO?- Module:

io - Class:

BytesIO - Purpose:

- Provides an in-memory binary stream.

- Acts like a file opened in binary mode (

'rb'/'wb'), but data lives in RAM rather than on disk.

from io import BytesIO

Why Use

BytesIO?- Speed

- No disk I/O—reads and writes happen in memory.

- Convenience

- Emulates file methods (

read(),write(),seek(), etc.). - Ideal for testing code that expects a file-like object.

- Emulates file methods (

- Safety

- No temporary files cluttering up your filesystem.

- Integration

- Libraries that accept file-like objects (e.g., PIL,

requests) will work withBytesIO.

- Libraries that accept file-like objects (e.g., PIL,

Basic Examples

1. Writing Bytes to a Buffer

(more…)from io import BytesIO # Create a BytesIO buffer buffer = BytesIO() # Write some binary data buffer.write(b'Hello, \xF0\x9F\x98\x8A') # includes a smiley emoji in UTF-8 # Retrieve the entire contents data = buffer.getvalue() print(data) # b'Hello, \xf0\x9f\x98\x8a' print(data.decode('utf-8')) # Hello, 😊 # Always close when done buffer.close() - Module:

-

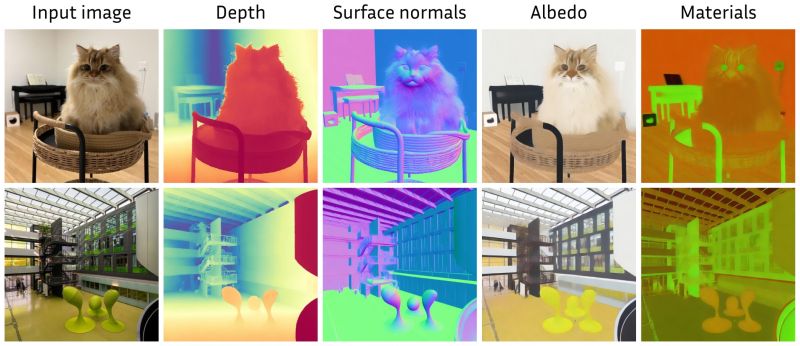

Marigold – repurposing diffusion-based image generators for dense predictions

Marigold repurposes Stable Diffusion for dense prediction tasks such as monocular depth estimation and surface normal prediction, delivering a level of detail often missing even in top discriminative models.

Key aspects that make it great:

– Reuses the original VAE and only lightly fine-tunes the denoising UNet

– Trained on just tens of thousands of synthetic image–modality pairs

– Runs on a single consumer GPU (e.g., RTX 4090)

– Zero-shot generalization to real-world, in-the-wild imageshttps://mlhonk.substack.com/p/31-marigold

https://arxiv.org/pdf/2505.09358

https://marigoldmonodepth.github.io/

-

Runway Aleph

https://runwayml.com/research/introducing-runway-aleph

Generate New Camera Angles

Generate the Next Shot

Use Any Style to Transfer to a Video

Change Environments, Locations, Seasons and Time of Day

Add Things to a Scene

Remove Things from a Scene

Change Objects in a Scene

Apply the Motion of a Video to an Image

Alter a Character’s Appearance

Recolor Elements of a Scene

Relight Shots

Green Screen Any Object, Person or Situation -

Mike Wong – AtoMeow – A Blue noise image stippling in Processing

https://github.com/mwkm/atoMeow

https://www.shadertoy.com/view/7s3XzX

This demo is created for coders who are familiar with this awesome creative coding platform. You may quickly modify the code to work for video or to stipple your own Procssing drawings by turning them into

PImageand run the simulation. This demo code also serves as a reference implementation of my article Blue noise sampling using an N-body simulation-based method. If you are interested in 2.5D, you may mod the code to achieve what I discussed in this artist friendly article.Convert your video to a dotted noise.

-

Aitor Echeveste – Free CG and Comp Projection Shot, Download the Assets & Follow the Workflow

What’s Included:

- Cleaned and extended base plates

- Full Maya and Nuke 3D projection layouts

- Bullet and environment CG renders with AOVs (RGB, normals, position, ID, etc.)

- Explosion FX in slow motion

- 3D scene geometry for projection

- Camera + lensing setup

- Light groups and passes for look development

FEATURED POSTS

-

Feed Your OWN Documents to a Local Large Language Model AI engine

RAG (retrieval augmented generation)

-

sRGB vs REC709 – An introduction and FFmpeg implementations

1. Basic Comparison

- What they are

- sRGB: A standard “web”/computer-display RGB color space defined by IEC 61966-2-1. It’s used for most monitors, cameras, printers, and the vast majority of images on the Internet.

- Rec. 709: An HD-video color space defined by ITU-R BT.709. It’s the go-to standard for HDTV broadcasts, Blu-ray discs, and professional video pipelines.

- Why they exist

- sRGB: Ensures consistent colors across different consumer devices (PCs, phones, webcams).

- Rec. 709: Ensures consistent colors across video production and playback chains (cameras → editing → broadcast → TV).

- What you’ll see

- On your desktop or phone, images tagged sRGB will look “right” without extra tweaking.

- On an HDTV or video-editing timeline, footage tagged Rec. 709 will display accurate contrast and hue on broadcast-grade monitors.

2. Digging Deeper

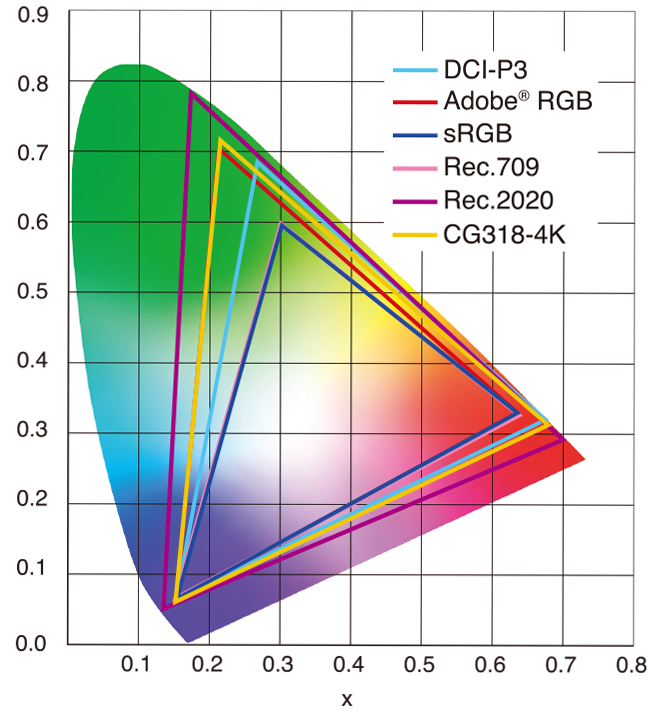

Feature sRGB Rec. 709 White point D65 (6504 K), same for both D65 (6504 K) Primaries (x,y) R: (0.640, 0.330) G: (0.300, 0.600) B: (0.150, 0.060) R: (0.640, 0.330) G: (0.300, 0.600) B: (0.150, 0.060) Gamut size Identical triangle on CIE 1931 chart Identical to sRGB Gamma / transfer Piecewise curve: approximate 2.2 with linear toe Pure power-law γ≈2.4 (often approximated as 2.2 in practice) Matrix coefficients N/A (pure RGB usage) Y = 0.2126 R + 0.7152 G + 0.0722 B (Rec. 709 matrix) Typical bit-depth 8-bit/channel (with 16-bit variants) 8-bit/channel (10-bit for professional video) Usage metadata Tagged as “sRGB” in image files (PNG, JPEG, etc.) Tagged as “bt709” in video containers (MP4, MOV) Color range Full-range RGB (0–255) Studio-range Y′CbCr (Y′ [16–235], Cb/Cr [16–240])

Why the Small Differences Matter

(more…) - What they are

-

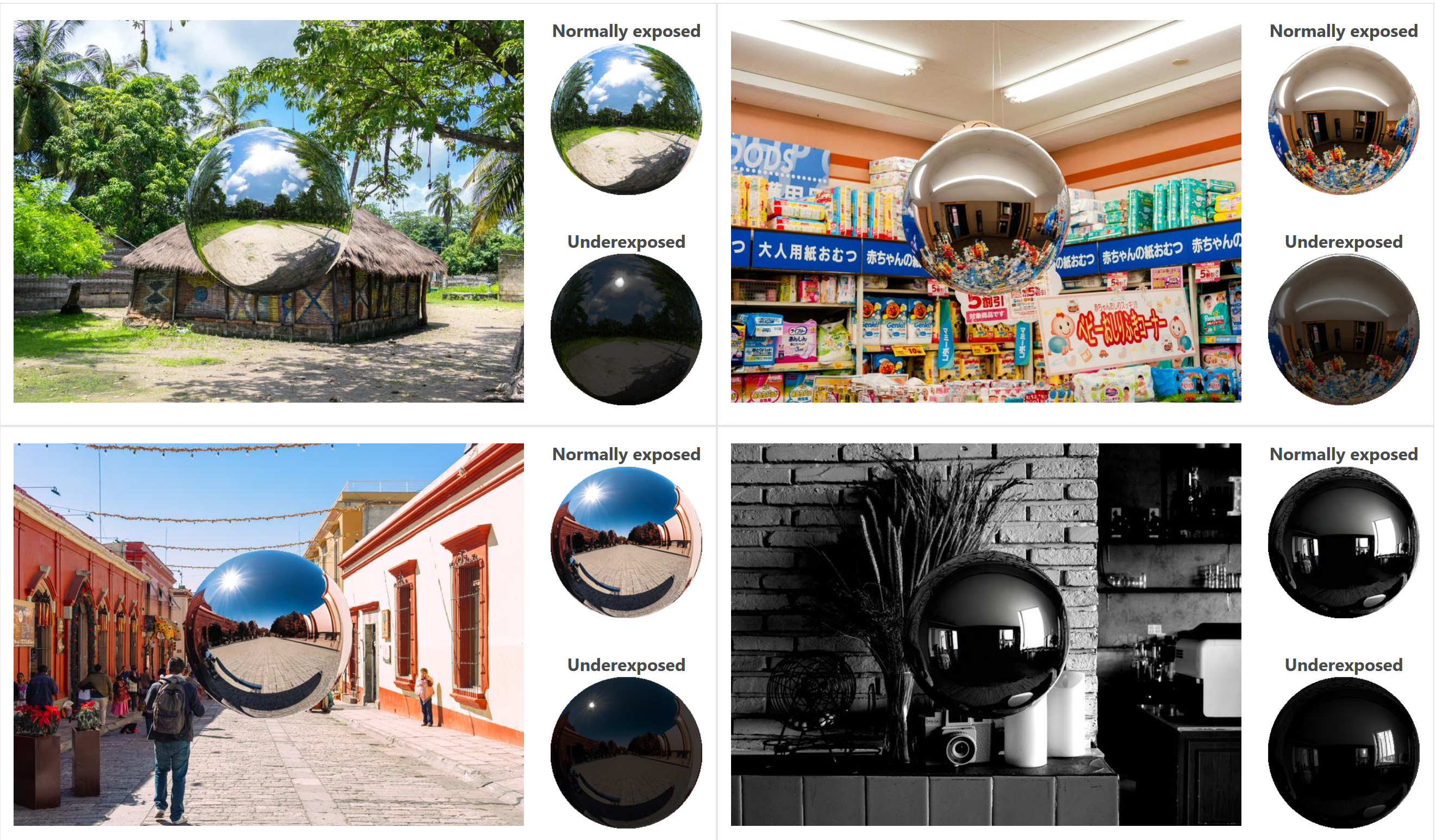

DiffusionLight: HDRI Light Probes for Free by Painting a Chrome Ball

https://diffusionlight.github.io/

https://github.com/DiffusionLight/DiffusionLight

https://github.com/DiffusionLight/DiffusionLight?tab=MIT-1-ov-file#readme

https://colab.research.google.com/drive/15pC4qb9mEtRYsW3utXkk-jnaeVxUy-0S

“a simple yet effective technique to estimate lighting in a single input image. Current techniques rely heavily on HDR panorama datasets to train neural networks to regress an input with limited field-of-view to a full environment map. However, these approaches often struggle with real-world, uncontrolled settings due to the limited diversity and size of their datasets. To address this problem, we leverage diffusion models trained on billions of standard images to render a chrome ball into the input image. Despite its simplicity, this task remains challenging: the diffusion models often insert incorrect or inconsistent objects and cannot readily generate images in HDR format. Our research uncovers a surprising relationship between the appearance of chrome balls and the initial diffusion noise map, which we utilize to consistently generate high-quality chrome balls. We further fine-tune an LDR difusion model (Stable Diffusion XL) with LoRA, enabling it to perform exposure bracketing for HDR light estimation. Our method produces convincing light estimates across diverse settings and demonstrates superior generalization to in-the-wild scenarios.”