LATEST POSTS

-

VR-NeRF: High-Fidelity Virtualized Walkable Spaces

An end-to-end system for the high-fidelity capture, model reconstruction, and real-time rendering of walkable spaces in virtual reality using neural radiance fields.

-

Epic Games Store Still Makes No Money

https://80.lv/articles/epic-games-store-still-makes-no-money/

Recently, Epic Games has encountered financial challenges, leading to significant steps. Towards the end of September, the company laid off around 16% of its staff, which is approximately 830 employees. Subsequently, in early October, Epic Games announced a price hike for non-game developers utilizing Unreal Engine. During this announcement, CEO Tim Sweeney openly acknowledged the financial difficulties that the studio has faced since July.

-

Quiet Quitting at work – Causes and remedies

Quiet quitting isn’t about leaving a job.

It’s when people stay but mentally check out. They do the bare minimum. No excitement. No extra effort.

It’s a silent alarm. Your team may be losing interest right under your nose.

And it’s a big deal. Why?

- Because it affects:

- Your team’s morale

- Your team’s productivity

- Your company’s profitability

- And everyone’s overall success

- Resources are already stretched thin.

- You need to get the best from your team.

What can employers do? Many of the causes are within your control:

➡️ Listen Well

Talk to your team often.

Listen to what they say. Then take action.

➡️ Recognize Efforts

Public recognition can boost morale.

A simple “thank you” goes a long way.

➡️ Promote Balance

Allow time for life outside work.

Overworked employees burn out.

➡️ Give Chances to Grow

Invest in them. Provide training.

Show them a career path.

➡️ Build a Positive Culture

Ensure everyone feels valued and respected.

➡️ Set Clear Goals

Clearly define roles. Tell them what you expect.

➡️ Lead by Example

Show excitement. Work hard.

Be the way you want them to be.

Quiet quitting isn’t just an employee issue. It’s a leadership opportunity. It’s a chance to re-engage, re-inspire, and revitalize your workplace.

Resources

https://www.cbsnews.com/news/workers-disengaged-quiet-quitting-their-jobs-gallup/

https://www.gallup.com/workplace/349484/state-of-the-global-workplace.aspx

-

Canon RF 5.2mm f2.8L Dual Fisheye EOS VR System for VR photography and editing

As part of the EOS VR System (this lens paired with an EOS R5 updated with firmware 1.5.0 or higher and one of Canon’s VR software solutions), you can create immersive 3D content that can be viewed on compatible head-mounted displays, including the Oculus Quest 2 and others. Viewers can take in the scene with a vivid, wide field of view simply by moving their head. This is the world’s first digital interchangeable lens that can capture stereoscopic 3D 180° VR imagery to a single image sensor.

The pairing of this lens and the EOS R5 camera brings high resolution video recording at up to 8K DCI 30p and 4K DCI 60p.

-

AI and the Law – TV/Theatrical 2023 – SAG-AFTRA Contract and Regulating AI

https://www.sagaftra.org/files/sa_documents/AI%20TVTH.pdf

https://www.sagaftra.org/files/sa_documents/TV-Theatrical_23_Summary_Agreement_Final.pdf

Mike Seymour : A Deep Dive into the New Laws around Governing AI

https://www.fxguide.com/fxpodcasts/fxpodcast-361-a-deep-dive-into-the-new-laws-around-governing-ai/

Local copies

Studios Align With Big Tech in a Risky Bet

Under U.S. copyright law, directors and writers are not entitled to some rights that exist in other countries, including the U.K., France and Italy. This is because the contributions of writers and directors in America are typically considered “works-made-for-hire,” which establishes creators as employees and producers as the owner of any copyright.

FEATURED POSTS

-

AI and the Law – CartoonBrew.com : Lionsgate signs deal with AI company Runway, hoping that AI can eliminate storyboard artists and VFX crews

The goal is to reduce costs by replacing traditional storyboard artists and VFX crews with AI-generated “cinematic video.” Lionsgate hopes to use this technology for both pre- and post-production processes. While the company promotes the cost-saving potential, the creative community has raised concerns, as Runway is currently facing a lawsuit over copyright infringement.

-

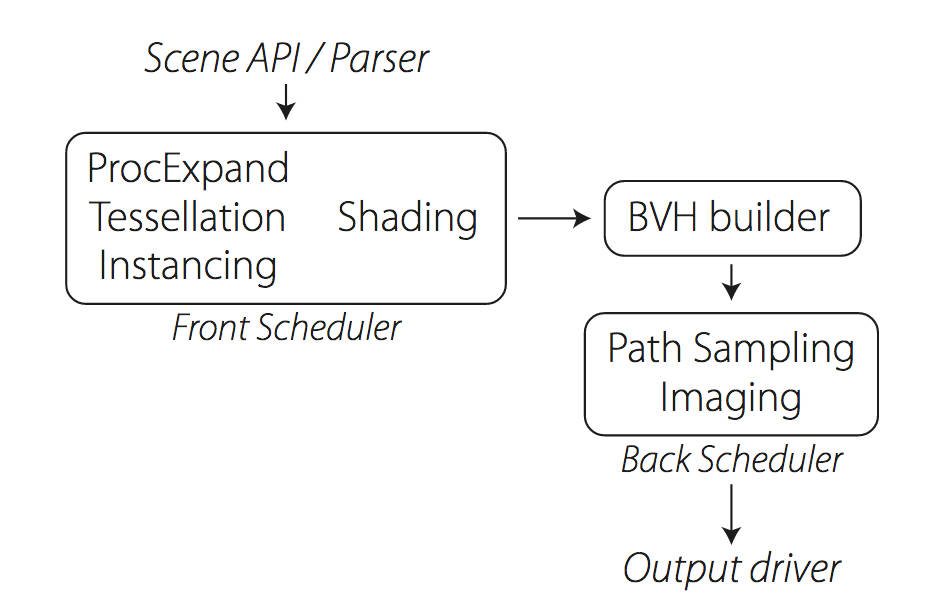

Weta Digital – Manuka Raytracer and Gazebo GPU renderers – pipeline

https://jo.dreggn.org/home/2018_manuka.pdf

http://www.fxguide.com/featured/manuka-weta-digitals-new-renderer/

The Manuka rendering architecture has been designed in the spirit of the classic reyes rendering architecture. At its core, reyes is based on stochastic rasterisation of micropolygons, facilitating depth of field, motion blur, high geometric complexity, and programmable shading.

Over the years, however, the expectations have risen substantially when it comes to image quality. Computing pictures which are indistinguishable from real footage requires accurate simulation of light transport, which is most often performed using some variant of Monte Carlo path tracing. Unfortunately this paradigm requires random memory accesses to the whole scene and does not lend itself well to a rasterisation approach at all.

This is commonly achieved with Monte Carlo path tracing, using a paradigm often called shade-on-hit, in which the renderer alternates tracing rays with running shaders on the various ray hits. The shaders take the role of generating the inputs of the local material structure, which is then used by path sampling logic to evaluate contributions and to inform what further rays to cast through the scene.

Manuka is both a uni-directional and bidirectional path tracer and encompasses multiple importance sampling (MIS). Interestingly, and importantly for production character skin work, it is the first major production renderer to incorporate spectral MIS in the form of a new ‘Hero Spectral Sampling’ technique, which was recently published at Eurographics Symposium on Rendering 2014.
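As a rough illustration of the hero wavelength idea mentioned above: one "hero" wavelength is sampled per path, and the remaining wavelengths are placed at equal spacings across the visible range, wrapping around at the ends. The sketch below is not Weta's code; the function name, the 380–780 nm range, and the count of four wavelengths are assumptions chosen for the example.

```python
def hero_wavelengths(hero_nm, count=4, lambda_min=380.0, lambda_max=780.0):
    """Return `count` wavelengths: the hero plus equally spaced rotations.

    The range and count are illustrative assumptions, not values
    taken from the Manuka renderer.
    """
    span = lambda_max - lambda_min
    return [
        # Shift the hero by j/count of the range and wrap back into it.
        lambda_min + (hero_nm - lambda_min + j * span / count) % span
        for j in range(count)
    ]


print(hero_wavelengths(500.0))  # [500.0, 600.0, 700.0, 400.0]
```

Each path then carries all of these wavelengths at once, with the hero wavelength driving directional sampling decisions and the others combined through MIS.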

Manuka proposes a shade-before-hit paradigm instead, minimising I/O strain (and some memory costs) on the system. It leverages locality of reference by running pattern generation shaders before light transport simulation by path sampling is executed, “compressing” any bvh structure as needed and thereby limiting duplication of source data.

The difference from reyes is that instead of baking colors into the geometry, Manuka bakes surface closures. This means that light transport is still calculated with path tracing, but all texture lookups etc. are done up-front and baked into the geometry.

The main drawback of this method is that geometry has to be tessellated to its highest, stable topology before shading can be evaluated properly, hence the high cost to first pixel. Even a basic four-vertex square becomes a much more complex model with this approach.

Manuka uses the RenderMan Shading Language (rsl) for programmable shading [Pixar Animation Studios 2015], but we do not invoke rsl shaders when intersecting a ray with a surface (often called shade-on-hit). Instead, we pre-tessellate and pre-shade all the input geometry in the front end of the renderer.

This way, we can efficiently order shading computations to support near-optimal texture locality, vectorisation, and parallelism. This system avoids repeated evaluation of shaders at the same surface point, and presents a minimal amount of memory to be accessed during light transport time. An added benefit is that the acceleration structure for ray tracing (a bounding volume hierarchy, bvh) is built once on the final tessellated geometry, which allows us to ray trace more efficiently than multi-level bvhs and avoids costly caching of on-demand tessellated micropolygons and the associated scheduling issues.

For the shading reasons above, in terms of AOVs, the studio approach is to succeed at combining complex shading with ray paths in the render rather than pass a multi-pass render to compositing.
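The contrast between shade-on-hit and shade-before-hit described above can be sketched with a toy example that only counts shader invocations. Everything here is hypothetical: `pattern_shader` stands in for an expensive texture or pattern shader, and the list of hit vertex IDs stands in for ray hits during light transport.

```python
from collections import Counter


def pattern_shader(vertex_id, calls):
    """Stand-in for an expensive pattern shader (hypothetical)."""
    calls[vertex_id] += 1
    return {"albedo": 0.1 * (vertex_id % 10)}


def shade_on_hit(hits):
    """Run the shader every time a ray hits a vertex."""
    calls = Counter()
    results = [pattern_shader(v, calls) for v in hits]
    return results, calls


def shade_before_hit(num_vertices, hits):
    """Shade every vertex once up front, then only look values up."""
    calls = Counter()
    baked = [pattern_shader(v, calls) for v in range(num_vertices)]
    results = [baked[v] for v in hits]
    return results, calls


hits = [0, 1, 1, 2, 1, 0, 3, 1]  # vertex 1 is hit four times
on_hit_results, on_hit_calls = shade_on_hit(hits)
baked_results, baked_calls = shade_before_hit(4, hits)

print(sum(on_hit_calls.values()), sum(baked_calls.values()))  # 8 4
```

Both variants return the same shading inputs, but the pre-shaded version runs the shader exactly once per vertex. The flip side, as noted above, is that every vertex is shaded before the first ray is traced, including vertices no ray ever reaches.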

For the spectral rendering component, the light transport stage is fully spectral, using a continuously sampled wavelength which is traced with each path and used to apply the spectral camera sensitivity of the sensor. This allows the renderer to faithfully reproduce any degree of observer metamerism present in the camera footage the renders are intended to match, as well as complex materials which require wavelength-dependent phenomena such as diffraction, dispersion, interference, iridescence, or chromatic extinction and Rayleigh scattering in participating media.

As opposed to the original reyes paper, we use bilinear interpolation of these bsdf inputs later when evaluating bsdfs per path vertex during light transport. This improves temporal stability of geometry which moves very slowly with respect to the pixel raster.
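A minimal sketch of what bilinear interpolation of pre-shaded inputs might look like on a single micropolygon quad. The dictionary layout, the input names (`roughness`, `albedo`), and the corner ordering are assumptions made for illustration, not Manuka's data structures.

```python
def bilerp(c00, c10, c01, c11, u, v):
    """Bilinearly interpolate four corner values at (u, v) in [0, 1]^2."""
    bottom = c00 * (1.0 - u) + c10 * u
    top = c01 * (1.0 - u) + c11 * u
    return bottom * (1.0 - v) + top * v


def interpolate_inputs(corners, u, v):
    """Interpolate each named input stored at the four quad corners.

    `corners` is ordered (u=0,v=0), (u=1,v=0), (u=0,v=1), (u=1,v=1).
    """
    c00, c10, c01, c11 = corners
    return {k: bilerp(c00[k], c10[k], c01[k], c11[k], u, v) for k in c00}


corners = (
    {"roughness": 0.0, "albedo": 0.0},
    {"roughness": 1.0, "albedo": 0.0},
    {"roughness": 0.0, "albedo": 1.0},
    {"roughness": 1.0, "albedo": 1.0},
)
print(interpolate_inputs(corners, 0.5, 0.5))  # {'roughness': 0.5, 'albedo': 0.5}
```

Because the interpolated values vary smoothly across the quad, a hit point that drifts slowly over the surface sees slowly changing inputs rather than a jump from one constant per-micropolygon value to the next.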

In terms of the pipeline, everything rendered at Weta was already completely interwoven with their deep data pipeline, and Manuka was very much written with deep data in mind. Here, Manuka does not so much extend the deep capabilities as fully match the already extremely complex and powerful setup Weta Digital enjoys with RenderMan. For example, an ape in a scene can be selected, its ID is available, and a NUKE artist can then paint in 3D on, say, a hand and part of the way up the neutral-posed ape.

We called our system Manuka, as a respectful nod to reyes: we had heard a story from a former ILM employee about how reyes got its name from how fond the early Pixar people were of their lunches at Point Reyes, and decided to name our system after our surrounding natural environment, too. Manuka is a kind of tea tree very common in New Zealand which has very many very small leaves, in analogy to micropolygons in a tree structure for ray tracing. It also happens to be the case that Weta Digital’s main site is on Manuka Street.