LATEST POSTS

-

Apple launches Final Cut Camera app to support multicam productions

https://www.theverge.com/2024/5/7/24151109/apple-final-cut-camera-app-support-multicam-ipad

Apple has released Final Cut Camera for iPhone and iPad, allowing filmmakers to capture video and stream it live to an iPad for a multicam shoot. The updated Final Cut Pro for iPad 2 app lets users control each connected device running Final Cut Camera from a multiscreen view. In the new version, users can switch between production and editing at any time to live-cut their projects.

-

The 3 Body Problem and the case against Determinism

It is becoming clear that deterministic physics cannot easily answer every question about nature, at either the astronomical or the biological level.

Is this a limitation of modern mathematics and tools, or an actual barrier?

The Three-Body Problem is one of the most enduring challenges in celestial mechanics, addressing the complex motion of three celestial bodies interacting under gravity. Governed by Newton’s laws of motion and the law of universal gravitation, it seeks to predict the paths of the bodies based on their masses, positions, and velocities. While the Two-Body Problem has exact solutions described by Kepler’s laws, introducing a third body leads to a nonlinear system of equations with no general analytical solution. This complexity arises from the chaotic interactions between the bodies, where even minute changes in initial conditions can lead to vastly different trajectories, a key aspect of chaos theory.
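The sensitivity to initial conditions can be explored numerically. The following is only a minimal sketch under stated assumptions, not a production integrator: it works in 2D, uses arbitrary units with G = 1, adds a tiny softening term to avoid division by zero, and advances the system with fixed velocity-Verlet steps. The names `accelerations`, `simulate` and `divergence` are hypothetical helpers introduced for illustration.

```python
import math

G = 1.0  # gravitational constant in arbitrary units (assumption)

def accelerations(pos, masses):
    """Newtonian gravitational acceleration on each body (2D)."""
    acc = [[0.0, 0.0] for _ in pos]
    for i in range(len(pos)):
        for j in range(len(pos)):
            if i == j:
                continue
            dx = pos[j][0] - pos[i][0]
            dy = pos[j][1] - pos[i][1]
            r2 = dx * dx + dy * dy + 1e-9  # tiny softening avoids division by zero
            inv_r3 = 1.0 / (r2 * math.sqrt(r2))
            acc[i][0] += G * masses[j] * dx * inv_r3
            acc[i][1] += G * masses[j] * dy * inv_r3
    return acc

def simulate(pos, vel, masses, dt=0.001, steps=2000):
    """Advance the system with velocity-Verlet steps; inputs are not modified."""
    pos = [p[:] for p in pos]
    vel = [v[:] for v in vel]
    acc = accelerations(pos, masses)
    for _ in range(steps):
        for i in range(len(pos)):
            for k in range(2):
                vel[i][k] += 0.5 * dt * acc[i][k]  # first half kick
                pos[i][k] += dt * vel[i][k]        # drift
        acc = accelerations(pos, masses)
        for i in range(len(pos)):
            for k in range(2):
                vel[i][k] += 0.5 * dt * acc[i][k]  # second half kick
    return pos, vel

def divergence(pos, vel, masses, nudge=1e-6, **kwargs):
    """Distance between body 0's final positions with and without a tiny nudge."""
    base, _ = simulate(pos, vel, masses, **kwargs)
    moved = [p[:] for p in pos]
    moved[0][0] += nudge  # perturb one coordinate of one body
    other, _ = simulate(moved, vel, masses, **kwargs)
    return math.dist(base[0], other[0])
```

Calling `divergence` with the same starting configuration and a growing number of `steps` gives a feel for how quickly two nearly identical systems drift apart. The exact numbers depend on the chosen configuration and step size.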

Historically, the Three-Body Problem has fascinated some of the greatest scientific minds. Isaac Newton laid its foundation, but it was Leonhard Euler and Joseph-Louis Lagrange who discovered specific cases with periodic or predictable solutions. Euler found collinear solutions, in which the three bodies stay aligned on a single rotating line, while Lagrange found the equilateral-triangle solutions. Together these give the five Lagrange points, positions where the gravitational forces and the orbital motion balance; only two of them (L4 and L5) are stable, and then only for suitable mass ratios. These solutions, though special cases, have profound implications for space exploration, such as identifying useful regions for satellite orbits.

Despite the chaotic nature of the Three-Body Problem, researchers have discovered periodic solutions where the bodies follow repetitive paths, returning to their original positions after a fixed time. In the 1970s, Michel Hénon, Roger A. Broucke, and George Hadjidemetriou identified a fascinating family of such solutions, now known as the Broucke–Hénon–Hadjidemetriou family. These solutions often involve symmetric and elegant trajectories. Another celebrated periodic solution is the figure-eight orbit, found numerically by Cristopher Moore in 1993, in which three equal-mass bodies chase each other along a shared path resembling the number eight.

Other periodic solutions include equilateral triangle configurations (where the bodies maintain a triangular shape while rotating or oscillating) and collinear periodic orbits (where the bodies periodically align and reverse directions). These solutions highlight the intricate balance between gravitational forces and motion, offering glimpses of stability within the chaos.

While the Three-Body Problem laid the groundwork for understanding gravitational interactions, the study of higher n-body problems reveals the rich and chaotic dynamics of larger systems, offering critical insights into both cosmic structures and practical applications like orbital dynamics.

-

James Gerde – The way the leaves dance in the rain

https://www.instagram.com/gerdegotit/reel/C6s-2r2RgSu/

After spending a lot of time recently with SDXL, I’ve made my way back to SD 1.5.

While the models overall have less fidelity, there is just no comparing to the current motion models we have available for AnimateDiff with 1.5 models.

To date this is one of my favorite pieces. Not because I think it’s even the best it can be. But because the workflow adjustments unlocked some very important ideas I can’t wait to try out.

Performance by @silkenkelly and @itxtheballerina on IG

-

How the VFX industry is recovering from last year’s strikes

Jonathan Bronfman, CEO at MARZ, tells us: “I don’t think the industry will ever be the same. It will recover slowly in 2024. The streaming wars cost studios too much money and now they are all reevaluating their strategies.”

He notes that AI will play a big role in how things shake out. “Technology is pushing out the traditional approach, something which is long overdue. Studios in Hollywood have been operating the same way for decades, and now AI will move them off their pedestal.

“The entire industry is in for a reckoning. I think studios would have come to this realisation eventually, so it was inevitable, but I think the pressure from the strikes accelerated this.”

https://www.vfxwire.com/how-the-vfx-industry-is-recovering-from-last-years-strikes/

-

TLDR Newsletter – Keep up with tech in 5 minutes

Get the free daily email with summaries of the most interesting stories in startups, tech, and programming!

FEATURED POSTS

-

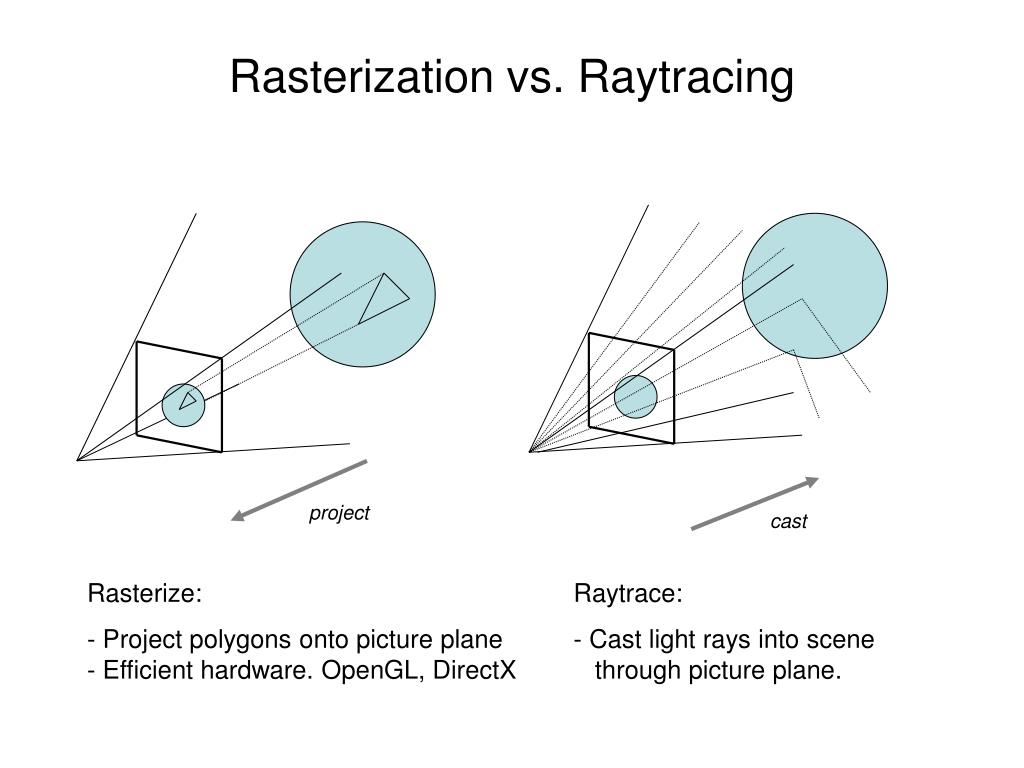

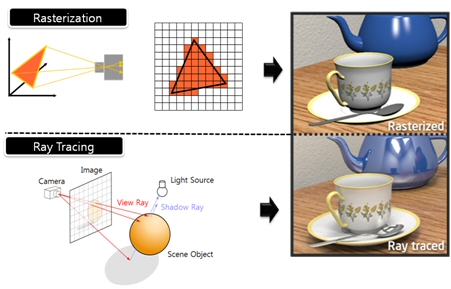

What’s the Difference Between Ray Casting, Ray Tracing, Path Tracing and Rasterization? Physical light tracing…

RASTERIZATION

Rasterisation (or rasterization) is the task of taking the information described in a vector graphics format, or the vertices of the triangles that make up 3D shapes, and converting it into a raster image (a series of pixels, dots or lines which, when displayed together, create the image that was represented via shapes). In other words, it means “rasterizing” vectors or 3D models onto a 2D plane for display on a computer screen.

For each triangle of a 3D shape, you project the corners of the triangle onto the virtual screen with some math (projective geometry). You then have the positions of the 3 corners of the triangle on the pixel screen. Those 3 points have texture coordinates, so you know where in the texture the 3 corners are. The cost is proportional to the number of triangles, and is only slightly affected by the screen resolution.

In computer graphics, a raster graphics or bitmap image is a dot matrix data structure that represents a generally rectangular grid of pixels (points of color), viewable via a monitor, paper, or other display medium.

With rasterization, objects on the screen are created from a mesh of virtual triangles, or polygons, that create 3D models of objects. A lot of information is associated with each vertex, including its position in space, as well as information about color, texture and its “normal,” which is used to determine the way the surface of an object is facing.

Computers then convert the triangles of the 3D models into pixels, or dots, on a 2D screen. Each pixel can be assigned an initial color value from the data stored in the triangle vertices.

Further pixel processing or “shading,” including changing pixel color based on how lights in the scene hit the pixel, and applying one or more textures to the pixel, combine to generate the final color applied to a pixel.

The main advantage of rasterization is its speed. However, rasterization is simply the process of computing the mapping from scene geometry to pixels and does not prescribe a particular way to compute the color of those pixels. On its own it does not simulate how light physically travels through the scene, so it cannot promise photorealistic output. That’s a big limitation of rasterization.

There are also multiple problems:

- If one triangle is behind another, you draw the overlapping pixels twice. You only keep the pixel from the triangle that is closer to you (Z-buffer), but you still do the work twice.

- The borders of your triangles are jagged, because each pixel is treated as either inside or outside the triangle. You can smooth these edges with anti-aliasing.

- You have to process every triangle (including the ones behind you) only to find that some do not touch the screen at all. (There are techniques to mitigate this by only considering triangles that are in the field of view.)

- Transparency is hard to handle: you can’t just average the colors of overlapping transparent triangles, you have to blend them in the right order.
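To make the Z-buffer idea concrete, here is a minimal sketch of a rasterizer. It assumes the triangles have already been projected to screen space, so each vertex is an `(x, y, z)` tuple with `z` as depth, and each triangle carries a single flat color. The `edge` and `rasterize` names are hypothetical helpers, and no real GPU pipeline is implied.

```python
import math

def edge(ax, ay, bx, by, px, py):
    """Signed area term: tells on which side of edge A->B the point P lies."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)

def rasterize(triangles, width, height):
    """triangles: list of (v0, v1, v2, color), vertices in screen space (x, y, z)."""
    depth = [[math.inf] * width for _ in range(height)]  # the Z-buffer
    image = [[None] * width for _ in range(height)]
    for v0, v1, v2, color in triangles:
        area = edge(v0[0], v0[1], v1[0], v1[1], v2[0], v2[1])
        if area == 0:
            continue  # degenerate triangle covers no pixels
        # Only visit pixels inside the triangle's bounding box, clipped to the screen.
        min_x = max(math.floor(min(v0[0], v1[0], v2[0])), 0)
        max_x = min(math.ceil(max(v0[0], v1[0], v2[0])), width - 1)
        min_y = max(math.floor(min(v0[1], v1[1], v2[1])), 0)
        max_y = min(math.ceil(max(v0[1], v1[1], v2[1])), height - 1)
        for y in range(min_y, max_y + 1):
            for x in range(min_x, max_x + 1):
                px, py = x + 0.5, y + 0.5  # sample at the pixel center
                # Barycentric weights; dividing by area makes winding order irrelevant.
                w0 = edge(v1[0], v1[1], v2[0], v2[1], px, py) / area
                w1 = edge(v2[0], v2[1], v0[0], v0[1], px, py) / area
                w2 = edge(v0[0], v0[1], v1[0], v1[1], px, py) / area
                if w0 < 0 or w1 < 0 or w2 < 0:
                    continue  # pixel center is outside the triangle
                z = w0 * v0[2] + w1 * v1[2] + w2 * v2[2]  # interpolated depth
                if z < depth[y][x]:  # keep only the closest surface
                    depth[y][x] = z
                    image[y][x] = color
    return image
```

Note that the inner loop still runs for pixels that end up hidden, which is the wasted work described in the first point of the list.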

RAY CASTING

It is almost the exact reverse of rasterization: you start from the virtual screen instead of the vector or 3D shapes, and you project a ray from each pixel of the screen until it intersects a triangle.

The cost is directly correlated with the number of pixels on the screen, and you need a really cheap way of finding the first triangle that a ray intersects. In the end, it is more expensive than rasterization, but it will, by design, ignore the triangles that are out of the field of view.

You can also let the ray continue past the first triangle it hits, to take a little of the color of the next one, and so on. This is useful for rendering triangle borders cleanly (less jagged) and for handling transparency correctly.
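As a rough illustration of ray casting, the sketch below fires one ray per pixel and keeps the nearest triangle hit. It assumes a simple orthographic camera looking down the +z axis and flat-colored triangles. The intersection test is the widely used Möller–Trumbore method, chosen here for brevity rather than taken from the sources below, and all function names are hypothetical. It tests every triangle for every ray; a real renderer would use an acceleration structure to find the first hit cheaply.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Distance along the ray to the triangle, or None if there is no hit."""
    e1, e2 = sub(v1, v0), sub(v2, v0)
    h = cross(direction, e2)
    det = dot(e1, h)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle plane
    inv = 1.0 / det
    s = sub(origin, v0)
    u = inv * dot(s, h)
    if u < 0 or u > 1:
        return None
    q = cross(s, e1)
    v = inv * dot(direction, q)
    if v < 0 or u + v > 1:
        return None
    t = inv * dot(e2, q)
    return t if t > eps else None  # ignore hits behind the ray origin

def cast(origin, direction, triangles):
    """Color of the nearest triangle hit by the ray, or None."""
    nearest_t, nearest_color = math.inf, None
    for v0, v1, v2, color in triangles:
        t = ray_triangle(origin, direction, v0, v1, v2)
        if t is not None and t < nearest_t:
            nearest_t, nearest_color = t, color
    return nearest_color

def render(triangles, width, height):
    """One ray per pixel center, orthographic camera looking down +z (assumption)."""
    return [[cast((x + 0.5, y + 0.5, 0.0), (0.0, 0.0, 1.0), triangles)
             for x in range(width)] for y in range(height)]
```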

RAY TRACING

It is the same idea as ray casting, except that once you hit a triangle you reflect off it and continue in a different direction. The number of reflections you allow is the “depth” of your ray tracing. The color of the pixel is calculated from the light source and all the polygons the ray had to reflect off to get to that screen pixel.

The easiest way to think of ray tracing is to look around you, right now. The objects you’re seeing are illuminated by beams of light. Now turn that around and follow the path of those beams backwards from your eye to the objects that light interacts with. That’s ray tracing.

Ray tracing is an eye-oriented process: it walks through each pixel, looking for the object that should be shown there. It can also be described as a technique that follows a beam of light from a set point through a pixel and simulates how it reacts when it encounters objects.
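The role of the reflection “depth” can be sketched in a few lines. This is a simplified illustration, not a full ray tracer: it assumes spheres instead of triangles (the intersection is shorter to write), a single grey-scale brightness per object instead of real shading, normalized ray directions, and perfect mirror reflection. The dictionary keys and function names are hypothetical.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def hit_sphere(origin, direction, center, radius):
    """Distance to the nearest front hit; direction must be normalized."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere
    t = -b - math.sqrt(disc)
    return t if t > 1e-6 else None

def trace(origin, direction, spheres, depth, background=0.0):
    """Brightness seen along a ray, following at most `depth` reflections."""
    nearest = None
    for s in spheres:
        t = hit_sphere(origin, direction, s["center"], s["radius"])
        if t is not None and (nearest is None or t < nearest[0]):
            nearest = (t, s)
    if nearest is None:
        return background
    t, s = nearest
    value = s["brightness"]  # the object's own contribution
    if depth > 0 and s["reflectivity"] > 0:
        point = tuple(origin[k] + t * direction[k] for k in range(3))
        normal = tuple((point[k] - s["center"][k]) / s["radius"] for k in range(3))
        d_n = dot(direction, normal)
        # Mirror the incoming direction about the surface normal.
        reflected = tuple(direction[k] - 2 * d_n * normal[k] for k in range(3))
        value += s["reflectivity"] * trace(point, reflected, spheres,
                                           depth - 1, background)
    return value
```

With `depth=0` a pixel only sees the first object it hits. Each extra level of depth lets the reflected ray pick up one more surface, or the background.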

Compared with rasterization, ray tracing is hard to implement in real time. A single ray can be traced and processed without much trouble, but after it bounces off an object it can turn into 10 rays, and those 10 can turn into 100, then 1,000, and so on. The increase is exponential, and calculating all these rays is time-consuming.
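The growth in ray count is a simple geometric series. As a back-of-the-envelope sketch, assume every hit spawns the same number of new rays (the fixed `branching` factor is an idealization, and `total_rays` is a hypothetical helper):

```python
def total_rays(branching, depth):
    """Rays traced for one pixel if every hit spawns `branching` new rays."""
    # One primary ray, then branching**k rays at bounce level k.
    return sum(branching ** k for k in range(depth + 1))

print(total_rays(10, 3))  # 1 + 10 + 100 + 1000 = 1111
```

Multiplying that figure by the number of pixels in a frame shows why the cost gets out of hand so quickly.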

Historically, computer hardware hasn’t been fast enough to use these techniques in real time, such as in video games. Moviemakers can take as long as they like to render a single frame, so they do it offline in render farms. Video games have only a fraction of a second. As a result, most real-time graphics rely on another technique, rasterization.

PATH TRACING

Path tracing can be used to solve more complex lighting situations.

Path tracing is a type of ray tracing. When path tracing is used for rendering, each ray produces only a single new ray per bounce. The rays do not follow a defined line per bounce (to a light, for example), but rather shoot off in a random direction. The path tracing algorithm then takes a random sampling of all of the rays to create the final image. This results in sampling a variety of different types of lighting.

When a ray hits a surface, it doesn’t trace a path to every light source; instead, it bounces off the surface and keeps bouncing until it hits a light source or exhausts some bounce limit.

It then calculates the amount of light transferred all the way to the pixel, including any color information gathered from surfaces along the way.

It then averages the values calculated from all the paths that were traced into the scene to get the final pixel color value.

It requires a ton of computing power, and if you don’t send out enough rays per pixel or don’t trace the paths far enough into the scene, you end up with a very spotty image, as many pixels fail to find any light sources from their rays. As you increase the samples per pixel, the image quality becomes better and better.
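The loop described above (one random bounce at a time, stop at a light or at the bounce limit, then average many paths) can be sketched with a deliberately tiny toy scene. The scene is an assumption made purely for illustration: a diffuse floor at y = 0 and a diffuse ceiling at y = 1, with a square light of half-width `light_half` in the ceiling, centered above the origin. All names and default values are hypothetical.

```python
import math
import random

def cosine_sample(rng):
    """Random unit direction around the surface normal, cosine-weighted."""
    u1, u2 = rng.random(), rng.random()
    r, phi = math.sqrt(u1), 2.0 * math.pi * u2
    # (sideways x, component along the normal, sideways z)
    return (r * math.cos(phi), math.sqrt(1.0 - u1), r * math.sin(phi))

def trace_path(x, z, rng, light_half, albedo, max_bounces, light_radiance=1.0):
    """Follow one random path that starts on the floor at (x, 0, z)."""
    throughput = 1.0
    going_up = True
    for _ in range(max_bounces):
        throughput *= albedo           # the surface absorbs part of the light
        dx, dy, dz = cosine_sample(rng)
        t = 1.0 / dy                   # the opposite plane is 1 unit away
        x, z = x + dx * t, z + dz * t  # where the path lands on that plane
        if going_up and abs(x) <= light_half and abs(z) <= light_half:
            return throughput * light_radiance  # the path found the light
        going_up = not going_up        # otherwise bounce back the other way
    return 0.0                         # bounce limit reached without finding light

def render_pixel(x, z, samples, seed=0, light_half=0.5, albedo=0.8, max_bounces=5):
    """Average many random paths to estimate the brightness of one floor point."""
    rng = random.Random(seed)
    total = sum(trace_path(x, z, rng, light_half, albedo, max_bounces)
                for _ in range(samples))
    return total / samples
```

Calling `render_pixel(0.0, 0.0, samples)` with a handful of samples and different seeds gives noticeably different answers, which is the “spotty” noise described above. Raising `samples` makes the estimates settle down.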

Ray tracing tends to be more efficient than path tracing. Basically, the render time of a ray tracer depends on the number of polygons in the scene. The more polygons you have, the longer it will take.

Meanwhile, the rendering time of a path tracer can be indifferent to the number of polygons, but it depends on the lighting situation: if you add a light, transparency, translucency, or other shader effects, the path tracer will slow down considerably.

blogs.nvidia.com/blog/2018/03/19/whats-difference-between-ray-tracing-rasterization/

https://en.wikipedia.org/wiki/Rasterisation

https://www.quora.com/Whats-the-difference-between-ray-tracing-and-path-tracing

-

HDRI shooting and editing by Xuan Prada and Greg Zaal

www.xuanprada.com/blog/2014/11/3/hdri-shooting

http://blog.gregzaal.com/2016/03/16/make-your-own-hdri/

http://blog.hdrihaven.com/how-to-create-high-quality-hdri/

Shooting checklist

- Full coverage of the scene (fish-eye shots)

- Backplates for look-development (including ground or floor)

- Macbeth chart for white balance

- Grey ball for lighting calibration

- Chrome ball for lighting orientation

- Basic scene measurements

- Material samples

- Individual HDR artificial lighting sources if required

Methodology

(more…)