RANDOM POSTs

-

Filmatick – THE ESSENTIAL SOFTWARE FOR PRE-VISUALIZATION OF FILM AND MEDIA

Script Mapping, Scene-by-Scene Breakdown, Set & Character Development, Animation, Lighting, Music, offline editing and Sound Effects.

-

Convert between light exposure and intensity

    import math

    def Exposure2Intensity(exposure):
        # Intensity is 2 raised to the exposure (stop) value.
        exp = float(exposure)
        result = math.pow(2, exp)
        print(result)

    Exposure2Intensity(0)

    def Intensity2Exposure(intensity):
        inarg = float(intensity)
        if inarg == 0:
            print("Exposure of zero intensity is undefined.")
            return
        if inarg < 1e-323:
            # Negative input: clamp to a tiny positive value so the log stays defined.
            inarg = max(inarg, 1e-323)
            print("Exposure of negative intensities is undefined. Clamping to a very small value instead (1e-323)")
        # Exposure is the base-2 logarithm of the intensity.
        result = math.log(inarg, 2)
        print(result)

    Intensity2Exposure(0.1)

Why Exposure?

Exposure is a stop value that multiplies the intensity by 2 to the power of the stop. Increasing exposure by 1 results in double the amount of light.

Artists think in “stops.” Doubling or halving brightness is easy math and common in grading and look-dev.

Exposure counts doublings in whole stops:

- +1 stop = ×2 brightness

- −1 stop = ×0.5 brightness

This gives perceptually even controls across both bright and dark values.
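As a minimal sketch of that idea, the snippet below applies an exposure offset to a linear RGB pixel. The helper name `apply_stops` and the sample pixel are hypothetical, chosen only for illustration.

```python
def apply_stops(pixel, stops):
    """Scale a linear RGB pixel by 2**stops (hypothetical helper)."""
    gain = 2.0 ** stops
    return tuple(channel * gain for channel in pixel)


pixel = (0.25, 0.5, 0.125)         # made-up linear RGB sample
brighter = apply_stops(pixel, 1)   # +1 stop doubles every channel
darker = apply_stops(pixel, -1)    # -1 stop halves every channel
print(brighter, darker)
```

The same gain is applied to every channel, so the hue of the pixel is unchanged and only its brightness moves.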

Why Intensity?

Intensity is linear.

It’s what render engines and compositors expect when:

- Summing values

- Averaging pixels

- Multiplying or filtering pixel data

Use intensity when you need the actual math on pixel/light data.
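A small sketch of why this matters, using two made-up intensity values: averaging the linear values and averaging their stop values give different answers, and only the linear average corresponds to actually mixing the light.

```python
import math

a, b = 1.0, 4.0   # two made-up linear intensities (0 and +2 stops)

linear_mean = (a + b) / 2                        # average of the light itself
stops_mean = (math.log2(a) + math.log2(b)) / 2   # average of the stop values
from_stops = 2.0 ** stops_mean                   # converted back to intensity

print(linear_mean)  # 2.5
print(from_stops)   # 2.0, the geometric mean rather than the arithmetic one
```

Averaging in stops yields the geometric mean, which is why sums, averages and filters are better done on linear intensity.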

Formulas (from the Python above)

- Intensity from exposure: intensity = 2**exposure

- Exposure from intensity: exposure = log₂(intensity)

Guardrails:

- Intensity must be > 0 to compute exposure.

- If intensity = 0 → exposure is undefined.

- Clamp tiny values (e.g. 1e−323) before using log₂.
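The guardrails can be sketched as a function that returns a value instead of printing it. This is one possible arrangement, not the only one: the name `intensity_to_exposure`, the `MIN_INTENSITY` constant and the choice to return `None` for zero are assumptions made for this example.

```python
import math

MIN_INTENSITY = 1e-323  # clamp floor taken from the guardrails above


def intensity_to_exposure(intensity):
    """Return exposure in stops, or None when intensity is exactly zero."""
    value = float(intensity)
    if value == 0.0:
        return None  # log2(0) is undefined
    # Negative or vanishingly small inputs are clamped so log2 stays defined.
    value = max(value, MIN_INTENSITY)
    return math.log2(value)


print(intensity_to_exposure(0.1))    # roughly -3.32
print(intensity_to_exposure(0))      # None
print(intensity_to_exposure(-1.0))   # a very large negative stop value
```

Returning `None` leaves it to the caller to decide how to treat black pixels, rather than silently mapping them to an extreme stop value.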

Use Exposure (stops) when…

- You want artist-friendly sliders (−5…+5 stops)

- Adjusting look-dev or grading in even stops

- Matching plates with quick ±1 stop tweaks

- Tweening brightness changes smoothly across ranges
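For the tweening case, a rough sketch: interpolate the exposure value linearly and convert each step to intensity, so every step multiplies brightness by the same ratio. The generator name `tween_stops` is hypothetical, and it assumes at least two steps.

```python
def tween_stops(start_stops, end_stops, steps):
    """Yield intensities for evenly spaced exposure values (needs steps >= 2)."""
    for i in range(steps):
        t = i / (steps - 1)   # runs from 0.0 to 1.0 inclusive
        stops = start_stops + t * (end_stops - start_stops)
        yield 2.0 ** stops


# Ramp from 0 to +2 stops in five steps.
ramp = list(tween_stops(0.0, 2.0, 5))
print([round(v, 3) for v in ramp])
```

The resulting intensities grow by a constant factor per step (about ×1.414 here), which tends to read as an even fade, whereas a linear ramp in intensity would change quickly in the shadows and slowly in the highlights.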

Use Intensity (linear) when…

- Storing raw pixel/light values

- Multiplying textures or lights by a gain

- Performing sums, averages, and filters

- Feeding values to render engines expecting linear data

Examples

- +2 stops → 2**2 = 4.0 (×4)

- +1 stop → 2**1 = 2.0 (×2)

- 0 stop → 2**0 = 1.0 (×1)

- −1 stop → 2**(−1) = 0.5 (×0.5)

- −2 stops → 2**(−2) = 0.25 (×0.25)

- Intensity 0.1 → exposure = log₂(0.1) ≈ −3.32

Rule of thumb

Think in stops (exposure) for controls and matching.

Compute in linear (intensity) for rendering and math.

-

Walt Disney Animation Amps Up Production With New Vancouver Studio

https://www.awn.com/blog/blame-canada-and-covid

deadline.com/2021/08/walt-disney-animation-studios-vancouver-studio-what-if-1234809175/

Effective next year, Walt Disney Animation Studios is throwing the doors open to a new facility in Vancouver, BC that will focus on long-form series and special projects for Disney+. The first in the pipeline is the anticipated, feature-quality musical series Moana.

-

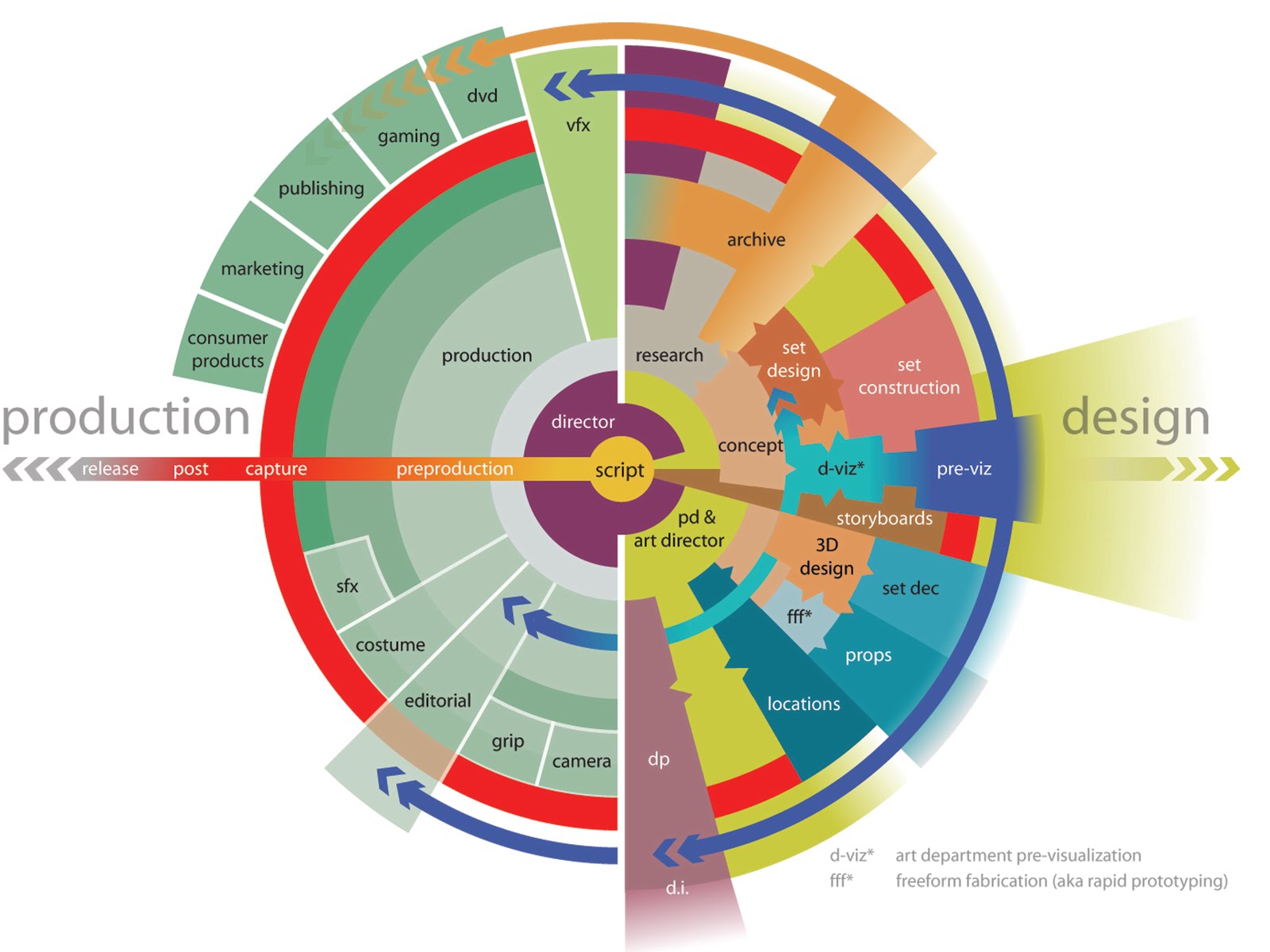

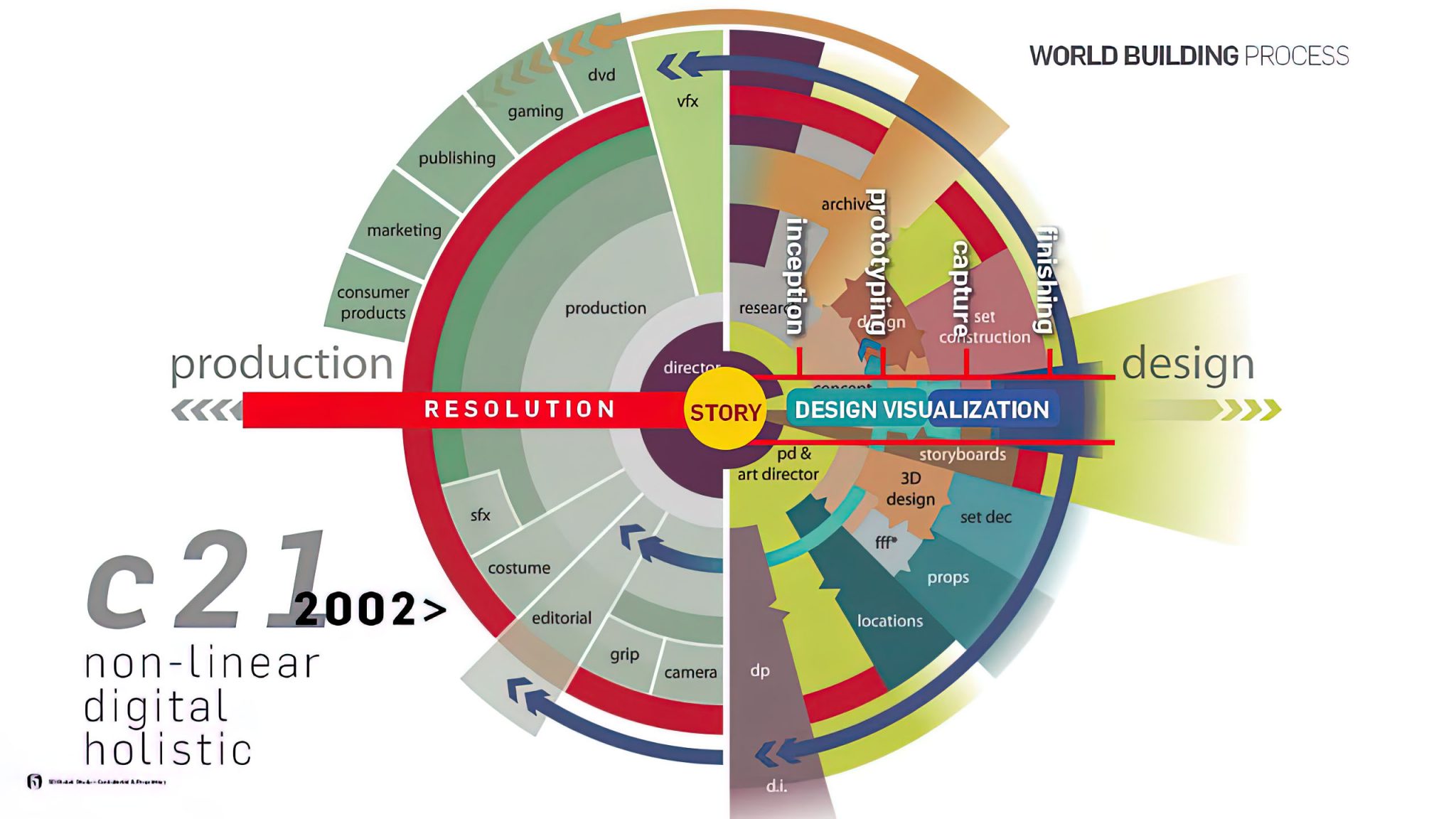

Alex McDowell’s mandala for non-linear virtual production

spring2013animationseminar.wordpress.com/2013/03/16/march-27-alex-mcdowell/

www.rinascimentodigitale.it/transmedia.html

“I think that it’s important now for people coming into the entertainment or pop culture business to know that all bets are off, … We don’t necessarily know that film making as we know it will exist in few years.

We don’t know that gaming is going to look the way it (now) looks or TV is going to look the way it looks. There is no doubt that there is convergence happening through these various media”

-

GIFStream – 4D Gaussian-based Immersive Video with Feature Stream

https://xdimlab.github.io/GIFStream/

Immersive video offers a 6-DoF free-viewing experience, potentially playing a key role in future video technology. Recently, 4D Gaussian Splatting has gained attention as an effective approach for immersive video due to its high rendering efficiency and quality, though maintaining quality with manageable storage remains challenging. To address this, we introduce GIFStream, a novel 4D Gaussian representation using a canonical space and a deformation field enhanced with time-dependent feature streams. These feature streams enable complex motion modeling and allow efficient compression by leveraging their motion-awareness and temporal correspondence. Additionally, we incorporate both temporal and spatial compression networks for end-to-end compression.

Experimental results show that GIFStream delivers high-quality immersive video at 30 Mbps, with real-time rendering and fast decoding on an RTX 4090.

-

Anders Langlands – Render Color Spaces

https://www.colour-science.org/anders-langlands/

This page compares images rendered in Arnold using spectral rendering and different sets of colourspace primaries: Rec.709, Rec.2020, ACES and DCI-P3. The SPD data for the GretagMacbeth Color Checker are the measurements of Noburu Ohta, taken from Mansencal, Mauderer and Parsons (2014) colour-science.org.


DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.